The Human Genome Project

Larry H Bernstein, MD, FCAP, Curator

Leaders in Pharmaceutical Intelligence

Series E. 2; 2.12

Craig Venter, Francis Collins

Francis Sellers Collins (born April 14, 1950) is an American physician–geneticist noted for his discoveries of disease genes and his leadership of the Human Genome Project. He is director of the National Institutes of Health (NIH) in Bethesda, Maryland.

Before being appointed director of the NIH, Collins led the Human Genome Project and other genomics research initiatives as director of the National Human Genome Research Institute (NHGRI), one of the 27 institutes and centers at NIH. Before joining NHGRI, he earned a reputation as a gene hunter at the University of Michigan. He has been elected to the Institute of Medicine and the National Academy of Sciences, and has received the Presidential Medal of Freedom and the National Medal of Science.

Collins also has written a number of books on science, medicine, and spirituality, including the New York Times bestseller, The Language of God: A Scientist Presents Evidence for Belief.

After leaving the helm of NHGRI and before becoming director of the NIH, he founded and served as president of The BioLogos Foundation, which promotes discourse on the relationship between science and religion and advocates the perspective that belief in Christianity can be reconciled with acceptance of evolution and science, especially through the advancement of evolutionary creation.[1] In 2009, Pope Benedict XVI appointed Collins to the Pontifical Academy of Sciences.

Collins was born in Staunton, Virginia, the youngest of four sons of Fletcher Collins and Margaret James Collins. Raised on a small farm in Virginia’s Shenandoah Valley, Collins was home schooled until the sixth grade.[2] He attended Robert E. Lee High School in Staunton, Virginia. Through most of his high school and college years he aspired to be a chemist, and he had little interest in what he then considered the “messy” field of biology. What he referred to as his “formative education” was received at the University of Virginia, where he earned a Bachelor of Science in Chemistry in 1970. He earned a Doctor of Philosophy in Physical Chemistry at Yale University in 1974. While at Yale, a course in biochemistry sparked his interest in the subject. After consulting with his mentor from the University of Virginia, Carl Trindle, he changed fields and enrolled in medical school at the University of North Carolina at Chapel Hill, earning an Doctor of Medicine there in 1977.

At Yale, Collins worked under the direction of Sherman Weissman, and in 1984 the two published a paper, “Directional Cloning of DNA Fragments at a Large distance From an Initial Probe: a Circularization Method”.[3] The method described was named chromosome jumping, to emphasize the contrast with an older and much more time-consuming method of copying DNA fragments called chromosome walking.[4]

Collins joined the University of Michigan faculty in 1984, rising to the rank of professor in internal medicine and human genetics. His gene-hunting approach, which he named “positional cloning”,[5][6] developed into a powerful[7] component of modern molecular genetics.

Several scientific teams worked in the 1970s and 1980s to identify genes and their loci as a cause of cystic fibrosis. Progress was modest until 1985, when Lap-Chee Tsui and colleagues at Toronto’s Hospital for Sick Children identified the locus for the gene.[8] It was then determined that a shortcut was needed to speed the process of identification, so Tsui contacted Collins, who agreed to collaborate with the Toronto team and share his chromosome-jumping technique. The gene was identified in June 1989,[9][10] and the results were published in the journal Science on Sept. 8, 1989.[11] This identification was followed by other genetic discoveries made by Collins and a variety of collaborators. They included isolation of the genes for Huntington’s disease,[12] neurofibromatosis,[13][14] multiple endocrine neoplasia type 1,[15] and Hutchinson-Gilford Progeria Syndrome.[16]

In 1993, National Institutes of Health Director Bernadine Healy appointed Collins to succeed James D. Watson as director of the National Center for Human Genome Research, which became National Human Genome Research Institute (NHGRI) in 1997. As director, he oversaw the International Human Genome Sequencing Consortium,[17] which was the group that successfully carried out the Human Genome Project.

In 1994, Collins founded NHGRI’s Division of Intramural Research,[18] a collection of investigator-directed laboratories that conduct genome research on the NIH campus.

In June 2000, Collins was joined by President Bill Clinton and biologist Craig Venter in making the announcement of a working draft of the human genome.[19] He stated that “It is humbling for me, and awe-inspiring to realize that we have caught the first glimpse of our own instruction book, previously known only to God.”[20][21][22] An initial analysis was published in February 2001, and scientists worked toward finishing the reference version of the human genome sequence by 2003, coinciding with the 50th anniversary of James D. Watson and Francis Crick‘s publication of the structure of DNA.

Another major activity at NHGRI during his tenure as director was the creation of the haplotype map of the human genome. This International HapMap Project produced a catalog of human genetic variations—called single-nucleotide polymorphisms—which is now being used to discover variants correlated with disease risk. Among the labs engaged in that effort is Collins’ own lab at NHGRI, which has sought to identify and understand the genetic variations that influence the risk of developing type 2 diabetes.

In addition to his basic genetic research and scientific leadership, Collins is known for his close attention to ethical and legal issues in genetics. He has been a strong advocate for protecting the privacy of genetic information and has served as a national leader in securing the passage of the federal Genetic Information and Nondiscrimination Act, which prohibits gene-based discrimination in employment and health insurance.[23] In 2013, spurred by concerns over the publication of the genome of the widely used HeLa cell line derived from the late Henrietta Lacks, Collins and other NIH leaders worked with the Lacks family to reach an agreement to protect their privacy, while giving researchers controlled access to the genomic data.[24]

Building on his own experiences as a physician volunteer in a rural missionary hospital in Nigeria,[25] Collins is also very interested in opening avenues for genome research to benefit the health of people living in developing nations. For example, in 2010, he helped establish an initiative called Human Heredity and Health in Africa (H3Africa)[26] to advance African capacity and expertise in genomic science.

Collins announced his resignation from NHGRI on May 28, 2008, but has continued to maintain an active lab there.[27]

While leading the National Human Genome Research Institute, Collins was elected to the Institute of Medicine and the National Academy of Sciences. He was a Kilby International Awards recipient in 1993, and he received the Biotechnology Heritage Award with J. Craig Venter in 2001.[45][46] He received the William Allan Award from the American Society of Human Genetics in 2005. In 2007, he was presented with thePresidential Medal of Freedom.[47] In 2008, he was awarded the Inamori Ethics Prize[48] and National Medal of Science.[49] In the same year, Collins won the Trotter Prize where he delivered a lecture called “The Language of God”.

Francis Collins: The Scientist as Believer

http://ngm.nationalgeographic.com/ngm/0702/voices.html

The often strained relationship between science and religion has become particularly combative lately. In one corner we have scientists such as Richard Dawkins and Steven Pinker who view religion as a relic of our superstitious, prescientific past that humanity should abandon. In the other corner are religious believers who charge that science is morally nihilistic and inadequate for understanding the wonders of existence. Into this breach steps Francis Collins, who offers himself as proof that science and religion can be reconciled. As leader of the Human Genome Project, Collins is among the world’s most important scientists, the head of a multibillion-dollar research program aimed at understanding human nature and healing our innate disorders. And yet in his best-selling book,The Language of God, he recounts how he accepted Christ as his savior in 1978 and has been a devout Christian ever since. “The God of the Bible is also the God of the genome,” he writes. “He can be worshiped in the cathedral or in the laboratory.” Recently Collins discussed his faith with science writer John Horgan, who has explored the boundaries between science and spirituality in his own books The End of Science and Rational Mysticism. Horgan, who has described himself as “an agnostic increasingly disturbed by religion’s influence on human affairs,” directs the Center for Science Writings at the Stevens Institute of Technology in Hoboken, New Jersey.

Interview by John Horgan

Horgan: As a scientist who looks for natural explanations of things and demands evidence, how can you also believe in miracles, like the resurrection?

Collins: I don’t have a problem with the concept that miracles might occasionally occur at moments of great significance, where there is a message being transmitted to us by God Almighty. But as a scientist I set my standards for miracles very high.

Horgan: The problem I have with miracles is not just that they violate what science tells us about how the world works. They also make God seem too capricious. For example, many people believe that if they pray hard enough God will intercede to heal them or a loved one. But does that mean that all those who don’t get better aren’t worthy?

Collins: In my own experience as a physician, I have not seen a miraculous healing, and I don’t expect to see one. Also, prayer for me is not a way to manipulate God into doing what we want him to do. Prayer for me is much more a sense of trying to get into fellowship with God. I’m trying to figure out what I should be doing rather than telling Almighty God what he should be doing. Look at the Lord’s Prayer. It says, “Thy will be done.” It wasn’t, “Our Father who art in Heaven, please get me a parking space.”

Horgan: I must admit that I’ve become more concerned lately about the harmful effects of religion because of religious terrorism like 9/11 and the growing power of the religious right in the United States.

Collins: What faith has not been used by demagogues as a club over somebody’s head? Whether it was the Inquisition or the Crusades on the one hand or the World Trade Center on the other? But we shouldn’t judge the pure truths of faith by the way they are applied any more than we should judge the pure truth of love by an abusive marriage. We as children of God have been given by God this knowledge of right and wrong, this Moral Law, which I see as a particularly compelling signpost to his existence. But we also have this thing called free will, which we exercise all the time to break that law. We shouldn’t blame faith for the ways people distort it and misuse it.

Horgan: Many people have a hard time believing in God because of the problem of evil. If God loves us, why is life filled with so much suffering?

Collins: That is the most fundamental question that all seekers have to wrestle with. First of all, if our ultimate goal is to grow, learn, and discover things about ourselves and things about God, then unfortunately a life of ease is probably not the way to get there. I know I have learned very little about myself or God when everything is going well. Also, a lot of the pain and suffering in the world we cannot lay at God’s feet. God gave us free will, and we may choose to exercise it in ways that end up hurting other people.

Horgan: Physicist Steven Weinberg, who is an atheist, asks why six million Jews, including his relatives, had to die in the Holocaust so that the Nazis could exercise their free will.

Collins: If God had to intervene miraculously every time one of us chose to do something evil, it would be a very strange, chaotic, unpredictable world. Free will leads to people doing terrible things to each other. Innocent people die as a result. You can’t blame anyone except the evildoers for that. So that’s not God’s fault. The harder question is when suffering seems to have come about through no human ill action. A child with cancer, a natural disaster, a tornado or tsunami. Why would God not prevent those things from happening?

Horgan: Some philosophers, such as Charles Hartshorne, have suggested that maybe God isn’t fully in control of his creation. The poet Annie Dillard expresses this idea in her phrase “God the semi-competent.”

Collins: That’s delightful—and probably blasphemous! An alternative is the notion of God being outside of nature and time and having a perspective of our blink-of-an-eye existence that goes both far back and far forward. In some admittedly metaphysical way, that allows me to say that the meaning of suffering may not always be apparent to me. There can be reasons for terrible things happening that I cannot know.

Horgan: I’m an agnostic, and I was bothered when in your book you called agnosticism a “cop-out.” Agnosticism doesn’t mean you’re lazy or don’t care. It means you aren’t satisfied with any answers for what after all are ultimate mysteries.

Collins: That was a put-down that should not apply to earnest agnostics who have considered the evidence and still don’t find an answer. I was reacting to the agnosticism I see in the scientific community, which has not been arrived at by a careful examination of the evidence. I went through a phase when I was a casual agnostic, and I am perhaps too quick to assume that others have no more depth than I did.

Horgan: Free will is a very important concept to me, as it is to you. It’s the basis for our morality and search for meaning. Don’t you worry that science in general and genetics in particular—and your work as head of the Genome Project—are undermining belief in free will?

Collins: You’re talking about genetic determinism, which implies that we are helpless marionettes being controlled by strings made of double helices. That is so far away from what we know scientifically! Heredity does have an influence not only over medical risks but also over certain behaviors and personality traits. But look at identical twins, who have exactly the same DNA but often don’t behave alike or think alike. They show the importance of learning and experience—and free will. I think we all, whether we are religious or not, recognize that free will is a reality. There are some fringe elements that say, “No, it’s all an illusion, we’re just pawns in some computer model.” But I don’t think that carries you very far.

Horgan: What do you think of Darwinian explanations of altruism, or what you call agape, totally selfless love and compassion for someone not directly related to you?

Collins: It’s been a little of a just-so story so far. Many would argue that altruism has been supported by evolution because it helps the group survive. But some people sacrificially give of themselves to those who are outside their group and with whom they have absolutely nothing in common. Such as Mother Teresa, Oskar Schindler, many others. That is the nobility of humankind in its purist form. That doesn’t seem like it can be explained by a Darwinian model, but I’m not hanging my faith on this.

Horgan: What do you think about the field of neurotheology, which attempts to identify the neural basis of religious experiences?

Collins: I think it’s fascinating but not particularly surprising. We humans are flesh and blood. So it wouldn’t trouble me—if I were to have some mystical experience myself—to discover that my temporal lobe was lit up. That doesn’t mean that this doesn’t have genuine spiritual significance. Those who come at this issue with the presumption that there is nothing outside the natural world will look at this data and say, “Ya see?” Whereas those who come with the presumption that we are spiritual creatures will go, “Cool! There is a natural correlate to this mystical experience! How about that!”

Horgan: Some scientists have predicted that genetic engineering may give us superhuman intelligence and greatly extended life spans, perhaps even immortality. These are possible long-term consequences of the Human Genome Project and other lines of research. If these things happen, what do you think would be the consequences for religious traditions?

Collins: That outcome would trouble me. But we’re so far away from that reality that it’s hard to spend a lot of time worrying about it, when you consider all the truly benevolent things we could do in the near term.

Horgan: I’m really asking, does religion require suffering? Could we reduce suffering to the point where we just won’t need religion?

Collins: In spite of the fact that we have achieved all these wonderful medical advances and made it possible to live longer and eradicate diseases, we will probably still figure out ways to argue with each other and sometimes to kill each other, out of our self-righteousness and our determination that we have to be on top. So the death rate will continue to be one per person, whatever the means. We may understand a lot about biology, we may understand a lot about how to prevent illness, and we may understand the life span. But I don’t think we’ll ever figure out how to stop humans from doing bad things to each other. That will always be our greatest and most distressing experience here on this planet, and that will make us long the most for something more.

Policy: NIH plans to enhance reproducibility

Francis S. Collins& Lawrence A. Tabak

A growing chorus of concern, from scientists and laypeople, contends that the complex system for ensuring the reproducibility of biomedical research is failing and is in need of restructuring1, 2. As leaders of the US National Institutes of Health (NIH), we share this concern and here explore some of the significant interventions that we are planning.

Science has long been regarded as ‘self-correcting’, given that it is founded on the replication of earlier work. Over the long term, that principle remains true. In the shorter term, however, the checks and balances that once ensured scientific fidelity have been hobbled. This has compromised the ability of today’s researchers to reproduce others’ findings.

Let’s be clear: with rare exceptions, we have no evidence to suggest that irreproducibility is caused by scientific misconduct. In 2011, the Office of Research Integrity of the US Department of Health and Human Services pursued only 12 such cases3. Even if this represents only a fraction of the actual problem, fraudulent papers are vastly outnumbered by the hundreds of thousands published each year in good faith.

Instead, a complex array of other factors seems to have contributed to the lack of reproducibility. Factors include poor training of researchers in experimental design; increased emphasis on making provocative statements rather than presenting technical details; and publications that do not report basic elements of experimental design4. Crucial experimental design elements that are all too frequently ignored include blinding, randomization, replication, sample-size calculation and the effect of sex differences. And some scientists reputedly use a ‘secret sauce’ to make their experiments work — and withhold details from publication or describe them only vaguely to retain a competitive edge5. What hope is there that other scientists will be able to build on such work to further biomedical progress?

Exacerbating this situation are the policies and attitudes of funding agencies, academic centres and scientific publishers. Funding agencies often uncritically encourage the overvaluation of research published in high-profile journals. Some academic centres also provide incentives for publications in such journals, including promotion and tenure, and in extreme circumstances, cash rewards6.

Then there is the problem of what is not published. There are few venues for researchers to publish negative data or papers that point out scientific flaws in previously published work. Further compounding the problem is the difficulty of accessing unpublished data — and the failure of funding agencies to establish or enforce policies that insist on data access.

Nature special: Challenges in irreproducible research

Preclinical problems

Reproducibility is potentially a problem in all scientific disciplines. However, human clinical trials seem to be less at risk because they are already governed by various regulations that stipulate rigorous design and independent oversight — including randomization, blinding, power estimates, pre-registration of outcome measures in standardized, public databases such as ClinicalTrials.gov and oversight by institutional review boards and data safety monitoring boards. Furthermore, the clinical trials community has taken important steps towards adopting standard reporting elements7.

Preclinical research, especially work that uses animal models1, seems to be the area that is currently most susceptible to reproducibility issues. Many of these failures have simple and practical explanations: different animal strains, different lab environments or subtle changes in protocol. Some irreproducible reports are probably the result of coincidental findings that happen to reach statistical significance, coupled with publication bias. Another pitfall is overinterpretation of creative ‘hypothesis-generating’ experiments, which are designed to uncover new avenues of inquiry rather than to provide definitive proof for any single question. Still, there remains a troubling frequency of published reports that claim a significant result, but fail to be reproducible.

Proposed NIH actions

As a funding agency, the NIH is deeply concerned about this problem. Because poor training is probably responsible for at least some of the challenges, the NIH is developing a training module on enhancing reproducibility and transparency of research findings, with an emphasis on good experimental design. This will be incorporated into the mandatory training on responsible conduct of research for NIH intramural postdoctoral fellows later this year. Informed by this pilot, final materials will be posted on the NIH website by the end of this year for broad dissemination, adoption or adaptation, on the basis of local institutional needs.

“Efforts by the NIH alone will not be sufficient to effect real change in this unhealthy environment.”

Several of the NIH’s institutes and centres are also testing the use of a checklist to ensure a more systematic evaluation of grant applications. Reviewers are reminded to check, for example, that appropriate experimental design features have been addressed, such as an analytical plan, plans for randomization, blinding and so on. A pilot was launched last year that we plan to complete by the end of this year to assess the value of assigning at least one reviewer on each panel the specific task of evaluating the ‘scientific premise’ of the application: the key publications on which the application is based (which may or may not come from the applicant’s own research efforts). This question will be particularly important when a potentially costly human clinical trial is proposed, based on animal-model results. If the antecedent work is questionable and the trial is particularly important, key preclinical studies may first need to be validated independently.

Informed by feedback from these pilots, the NIH leadership will decide by the fourth quarter of this year which approaches to adopt agency-wide, which should remain specific to institutes and centres, and which to abandon.

The NIH is also exploring ways to provide greater transparency of the data that are the basis of published manuscripts. As part of our Big Data initiative, the NIH has requested applications to develop a Data Discovery Index (DDI) to allow investigators to locate and access unpublished, primary data (see go.nature.com/rjjfoj). Should an investigator use these data in new work, the owner of the data set could be cited, thereby creating a new metric of scientific contribution unrelated to journal publication, such as downloads of the primary data set. If sufficiently meritorious applications to develop the DDI are received, a funding award of up to three years in duration will be made by September 2014. Finally, in mid-December, the NIH launched an online forum called PubMed Commons (see go.nature.com/8m4pfp) for open discourse about published articles. Authors can join and rate or contribute comments, and the system is being evaluated and refined in the coming months. More than 2,000 authors have joined to date, contributing more than 700 comments.

Community responsibility

Clearly, reproducibility is not a problem that the NIH can tackle alone. Consequently, we are reaching out broadly to the research community, scientific publishers, universities, industry, professional organizations, patient-advocacy groups and other stakeholders to take the steps necessary to reset the self-corrective process of scientific inquiry. Journals should be encouraged to devote more space to research conducted in an exemplary manner that reports negative findings, and should make room for papers that correct earlier work.

Related stories

We are pleased to see that some of the leading journals have begun to change their review practices. For example, Nature Publishing Group, the publishers of this journal, announced8 in May 2013 the following: restrictions on the length of methods sections have been abolished to ensure the reporting of key methodological details; authors use a checklist to facilitate the verification by editors and reviewers that critical experimental design features have been incorporated into the report, and editors scrutinize the statistical treatment of the studies reported more thoroughly with the help of statisticians. Furthermore, authors are encouraged to provide more raw data to accompany their papers online.

Similar requirements have been implemented by the journals of the American Association for the Advancement of Science — Science Translational Medicine in 2013 and Science earlier this month9 — on the basis of, in part, the efforts of the NIH’s National Institute of Neurological Disorders and Stroke to increase the transparency of how work is conducted10.

Perhaps the most vexed issue is the academic incentive system. It currently over-emphasizes publishing in high-profile journals. No doubt worsened by current budgetary woes, this encourages rapid submission of research findings to the detriment of careful replication. To address this, the NIH is contemplating modifying the format of its ‘biographical sketch’ form, which grant applicants are required to complete, to emphasize the significance of advances resulting from work in which the applicant participated, and to delineate the part played by the applicant. Other organizations such as the Howard Hughes Medical Institute have used this format and found it more revealing of actual contributions to science than the traditional list of unannotated publications. The NIH is also considering providing greater stability for investigators at certain, discrete career stages, utilizing grant mechanisms that allow more flexibility and a longer period than the current average of approximately four years of support per project.

In addition, the NIH is examining ways to anonymize the peer-review process to reduce the effect of unconscious bias (see go.nature.com/g5xr3c). Currently, the identifiers and accomplishments of all research participants are known to the reviewers. The committee will report its recommendations within 18 months.

Efforts by the NIH alone will not be sufficient to effect real change in this unhealthy environment. University promotion and tenure committees must resist the temptation to use arbitrary surrogates, such as the number of publications in journals with high impact factors, when evaluating an investigator’s scientific contributions and future potential.

The recent evidence showing the irreproducibility of significant numbers of biomedical-research publications demands immediate and substantive action. The NIH is firmly committed to making systematic changes that should reduce the frequency and severity of this problem — but success will come only with the full engagement of the entire biomedical-research enterprise.

Craig Venter, PhD

- Craig Venter, Ph.D., is regarded as one of the leading scientists of the 21st century for his numerous invaluable contributions to genomic research. He is founder, chairman, and CEO of the J. Craig Venter Institute (JCVI), a not-for-profit, research organization with approximately 250 scientists and staff dedicated to human, microbial, plant, synthetic and environmental genomic research, and the exploration of social and ethical issues in genomics.

Dr. Venter is co-founder, executive chairman and co-chief scientist of Synthetic Genomics Inc (SGI), a privately held company focused on developing products and solutions using synthetic genomics technologies.

Dr. Venter is also a co-founder, executive chairman and CEO of Human Longevity Inc (HLI), a San Diego-based genomics and cell therapy-based diagnostic and therapeutic company focused on extending the healthy, high performance human life span.

Dr. Venter began his formal education after a tour of duty as a Navy Corpsman in Vietnam from 1967 to 1968. After earning both a Bachelor’s degree in Biochemistry and a Ph.D. in Physiology and Pharmacology from the University of California at San Diego, he was appointed professor at the State University of New York at Buffalo and the Roswell Park Cancer Institute. In 1984, he moved to the National Institutes of Health campus where he developed Expressed Sequence Tags or ESTs, a revolutionary new strategy for rapid gene discovery. In 1992 Dr. Venter founded The Institute for Genomic Research (TIGR, now part of JCVI), a not-for-profit research institute, where in 1995 he and his team decoded the genome of the first free-living organism, the bacterium Haemophilus influenzae, using his new whole genome shotgun technique.

In 1998, Dr. Venter founded Celera Genomics to sequence the human genome using new tools and techniques he and his team developed. This research culminated with the February 2001 publication of the human genome in the journal, Science. He and his team at Celera also sequenced the fruit fly, mouse and rat genomes.

Dr. Venter and his team at JCVI continue to blaze new trails in genomics. They have sequenced and analyzed hundreds of genomes, and have published numerous important papers covering such areas as environmental genomics, the first complete diploid human genome, and the groundbreaking advance in creating the first self- replicating bacterial cell constructed entirely with synthetic DNA.

Dr. Venter is one of the most frequently cited scientists, and the author of more than 280 research articles. He is also the recipient of numerous honorary degrees, public honors, and scientific awards, including the 2008 United States National Medal of Science, the 2002 Gairdner Foundation International Award, the 2001 Paul Ehrlich and Ludwig Darmstaedter Prize and the King Faisal International Award for Science. Dr. Venter is a member of numerous prestigious scientific organizations including the National Academy of Sciences, the American Academy of Arts and Sciences, and the American Society for Microbiology.

http://www.jcvi.org/cms/about/bios/jcventer/0#sthash.tojhbWtd.dpuf

In 2001, Craig Venter made headlines for sequencing the human genome. In 2003, he started mapping the ocean’s biodiversity. And now he’s created the first synthetic lifeforms — microorganisms that can produce alternative fuels.

Craig Venter, the man who led the private effort to sequence the human genome, is hard at work now on even more potentially world-changing projects.

First, there’s his mission aboard the Sorcerer II, a 92-foot yacht, which, in 2006, finished its voyage around the globe to sample, catalouge and decode the genes of the ocean’s unknown microorganisms. Quite a task, when you consider that there are tens of millions of microbes in a single drop of sea water. Then there’s the J. Craig Venter Institute, a nonprofit dedicated to researching genomics and exploring its societal implications.

In 2005, Venter founded Synthetic Genomics, a private company with a provocative mission: to engineer new life forms. Its goal is to design, synthesize and assemble synthetic microorganisms that will produce alternative fuels, such as ethanol or hydrogen. He was on Time magzine’s 2007 list of the 100 Most Influential People in the World.

https://www.ted.com/speakers/craig_venter

https://www.ted.com/talks/craig_venter_on_dna_and_the_sea

https://www.ted.com/talks/craig_venter_is_on_the_verge_of_creating_synthetic_life

https://www.ted.com/talks/craig_venter_unveils_synthetic_life

http://www.technologyreview.com/news/529601/three-questions-for-j-craig-venter/

What’s clear is that genome research and data science are coming together in new ways, and at a much larger scale than ever before. We asked Venter why.

How are we doing in genomics?

In my view there have not been a significant number of advances. One reason for that is that genomics follows a law of very big numbers. I’ve had my genome for 15 years, and there’s not much I can learn because there are not that many others to compare it to.

Why did you hire an expert in machine translation as your top data scientist?

Until now, there’s not been software for comparing my genome to your genome, much less to a million genomes. We want to get to a point where it takes a few seconds to compare your genome to all the others. It’s going to take a lot of work to do that.

Google Translate started as a slow algorithm that took hours or days to run and was not very accurate. But Franz [Och] built a machine-learning version that could go out on the Web and find every article translated from German to English or vice versa, and learn from those. And then it was optimized, so it works in milliseconds.

I convinced Franz, and he convinced himself, that understanding the human genome at the scale that we are trying to do it is going to be one of the greatest translation challenges in history.

How is discovering the connection between genes and disease like translating languages?

Everything in a cell derives from your DNA code, all the proteins, their structure, whether they last seconds or days. All that is preprogrammed in DNA language. Then it is translated into life. People are going to be very surprised about how much of a DNA software species we are.

WHAT IS LIFE? A 21st CENTURY PERSPECTIVE

On the 70th Anniversary of Schroedinger’s Lecture at Trinity College by J. Craig Venter [7.12.12]

Introduction

by John Brockman

Several weeks ago, I received the following message from Craig Venter:

“John,

“I would like to extend an invitation for you to join me in Dublin, Ireland the week of July 10 during which one of the landmark events of 20th Century science will be celebrated and reinterpreted for the 21st Century as part of the Science in the City program of Euroscience Open Forum 2012 (ESOF 2012). This unique event will connect an important episode in Ireland’s scientific heritage with the frontier of contemporary research.

“In February 1943 one of the most distinguished scientists of the 20th Century, Erwin Schrödinger, delivered a seminal lecture, entitled ‘What is Life?’, under the auspices of the Dublin Institute for Advanced Studies, in Trinity College, Dublin. The then Prime Minister, Éamon de Valera, attended the lecture and an account of it featured in the 5 April 1943, issue of Timemagazine.

“The lecture presented far-sighted ideas on how hereditary information could be encoded in a chemical structure (aperiodic crystal) in living cells. Schrödinger’s book (1944) of the same title is considered to be a scientific classic. The book was cited by Crick and Watson as one of the inspirations which ultimately led them to unravel the structure of DNA in 1953, a breakthrough which won them the Nobel prize. Recent advances in genetics and synthetic biology mean that it is now timely to reconsider the fundamental question posed by Schrödinger 70 years ago. I have been asked to revisit Schrödinger’s question and will do so in a lecture entitled “What is Life? A 21st century perspective”; on the evening of Thursday, July 12 at the Examination Hall in Trinity College Dublin.”

Never one to turn down an interesting invitation, I was able to organize an interesting week beginning with an Edge Dinner in Turin, in honor of Venter, Brian Eno and myself, where Venter, in an after-dinner talk, began to publicly present some of the new ideas he would flesh out in his Dublin talk.

Then on to Dublin, where I sat in the front row at Examination Hall next to Jim Watson and Irish Prime Minister (the “Taoiseach) Enda Kenny for Venter’s lecture. At it’s conclusion, the two legendary scientists, Watson and Venter, shook hands on stage, as Watson congratulated Venter for “a beautiful lecture”. Schrödinger to Watson to Venter: It was an historic moment.

WHAT IS LIFE? A 21st CENTURY PERSPECTIVE

- CRAIG VENTER: I was asked earlier whether the goal is to dissect what Schrödinger had spoken and written, or to present the new summary, and I always like to be forward-looking, so I won’t give you a history lesson except for very briefly. I will present our findings on first on reading the genetic code, and then learning to synthesize and write the genetic code, and as many of you know, we synthesized an entire genome, booted it up to create an entirely new synthetic cell where every protein in the cell was based on the synthetic DNA code.

As you all know, Schrödinger’s book was published in 1944 and it was based on a series of three lectures here, starting in February of 1943. And he had to repeat the lectures, I read, on the following Monday because the room on the other side of campus was too small, and I understand people were turned away tonight, but we’re grateful for Internet streaming, so I don’t have to do this twice.

Also, due clearly to his historical role, and it’s interesting to be sharing this event with Jim Watson, who I’ve known and had multiple interactions with over the last 25 years, including most recently sharing the Double Helix Prize for Human Genome Sequencing with him from Cold Spring Harbor Laboratory a few years ago.

Schrödinger started his lecture with a key question and an interesting insight on it. The question was “How can the events in space and time, which take place within the boundaries of a living organism be accounted for by physics and chemistry?” It’s a pretty straightforward, simple question. Then he answered what he could at the time, “The obvious inability of present-day physics and chemistry to account for such events is no reason at all for doubting that they will be accounted for by those sciences.” While I only have around 40 minutes, not three lectures, I hope to convince you that there has been substantial progress in the last nearly 70 years since Schrödinger initially asked that question, to the point where the answer is at least nearly at hand, if not in hand.

I view that we’re now in what I’m calling “The Digital Age of Biology”. My teams work on synthesizing genomes based on digital code in the computer, and four bottles of chemicals illustrates the ultimate link between the computer code and the digital code.

Life is code, as you heard in the introduction, was very clearly articulated by Schrodinger as code script. Perhaps even more importantly, and something I missed on the first few readings of his book earlier in my career, was as far as I could tell, it’s the first mention that this code could be as simple as a binary code. And he used the example of how the Morse code with just dots and dashes, could be sufficient to give 34 different specifications. I’ve searched and I have not found any earlier references to the Morse code, although an historian that I know wrote Crick a letter asking about that, and Crick’s response was, “It was a metaphor that was obvious to everybody.” I don’t know if it was obvious to everybody after Schrodinger’s book, or some time before.

One of the things, though, Schrodinger was right about a lot of things, which is why, in fact, we celebrate what he talked about and what he wrote about, but some things he was clearly wrong about, like most scientists in his time, he relied on the biologist of the day. They thought that protein, not DNA was the genetic information. It’s really quite extraordinary because just in 1944 in the same year that he published his book is when the famous experiment by Oswald Avery, who was 65 and about ready to retire, along with this colleagues, Colin MacLeod and Maclyn McCarty, published their key paper demonstrating that DNA was the substance that causes bacterial transformation, and therefore was the genetic material.

This experiment was remarkably simple, and I wonder why it wasn’t done 50 years earlier with all the wonderful genetics work going on with drosophila, and chromosomes. Avery simply used proteolytic enzymes to destroy all the proteins associated with the DNA, and showed that the DNA, the naked DNA was, in fact, a transforming factor. The impact of this paper was far from instantaneous, as has happened in this field, in part because there was so much bias against DNA and for its proteins that it took a long time for them to sink in.

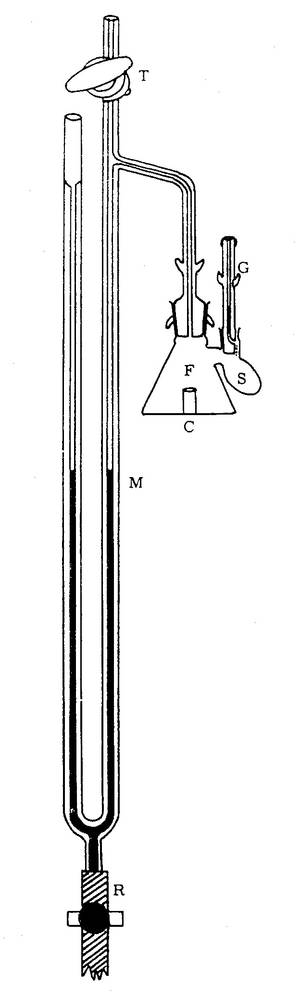

[Enlarge]

In 1949, was the first sequencing of a protein by Fred Sanger, and the protein was insulin. This work showed, in fact, that proteins consisted of linear amino acid codes. Sanger won the Nobel Prize in 1958 for his achievement. The sequence of insulin was very key in terms of leading to understanding the link between DNA and proteins. But as you heard in the introduction, obviously the discovery that changed the whole field and started us down the DNA route was the 1953 work by Watson and Crick with help from Maurice Wilkins and Rosalind Franklin showing that DNA was, in fact, a double helix which provided a clear explanation of how DNA could be self-replicated. Again, this was not, as I understand, instantly perceived as a breakthrough because of the bias of biochemists who were still hanging on to proteins as the genetic material. But soon with a few more experiments from others, the world began to change pretty dramatically.

The next big thing came from the work of Gobind Khorana and Marshall Nirenberg in 1961 where they worked out the triplet genetic code. It’s three letters of genetic code, coding for each amino acid. With this breakthrough it became clear how the linear DNA code had coded for the linear protein code. This was followed a few years later by Robert Holley’s discovery of the structure of tRNA, and tRNA is the key link between the messenger RNA and bringing in the amino acids in for protein synthesis. Holley, Nirenberg and Khorana shared the Nobel Prize in 1968 for their work.

[Enlarge] [Englarge]

The next decade brought us restriction enzymes from my friend and colleague, Ham Smith, who is at the Venter Institute now; in 1970 discovered the first restriction enzymes. These are the molecular scissors that cut DNA very precisely, and enabled the entire field of molecular engineering and molecular biology. Ham Smith, and Warner Arber, and Dan Nathans shared the Nobel prize in 1978 for their work. The ’70s brought not only some interesting dress codes and characters, (Laughter) but the beginning of the molecular splicing revolution, using restriction enzymes, Cohen and Boyer, and Paul Berg all published the first papers on recombinant DNA, and Cohen and Boyer at Stanford filed a patent on their work, and this was used by Genentech and Eli Lilly to produce a human insulin as the first recombinant drug.

DNA sequencing and reading the genetic code progressed much more slowly. In 1973 Maxam and Gilbert published a paper on only 24 base pairs, 24 letters of genetic code. RNA sequencing progressed a little bit faster, so the first actual genome, a viral genome, an RNA viral genome was sequenced in 1976 by Walter Fiers from Belgium. This was followed by Fred Sanger’s sequencing the first DNA virus, Phi X 174. This became the first viral DNA genome, and it was also accompanied by a new DNA sequencing technique of dideoxy DNA sequencing, now referred to as “Sanger sequencing” that Sanger introduced.

This is a picture of Sanger’s team that sequenced Phi X 174. The second guy from the left on the bottom is Clyde Hutchison, who is also a member of the Venter Institute, and joined us after retiring from the University of North Carolina, and played a key role in some of the synthetic genome work.

In 1975 I was just getting my PhD as the first genes were being sequenced. Twenty years later I led the team to sequence the first genome of living species, and Ham Smith was part of that team. This was Haemophilus influenzae. Instead of 5,000 letters of genetic code, this was 1.8 million letters of genetic code. Or about 300 times the size of the Phi X genome. Five years later, we upped the ante another 1,600 times with the first draft of the human genome using our whole genome shotgun technique.

I view DNA as an analogue coding molecule, and when we sequence the DNA, we are converting that analogue code into digital code; the 1s and 0s in the computer are very similar to the dots and dashes of Schrodinger’s metaphor. I call this process “digitizing biology”.

The human genome is about a half a million times larger the Phi X genome, so it shows how fast things were developing. Reading genomes has now progressed extremely rapidly from requiring years or decades, it now takes about two hours to sequence a human genome. Instead of genomes per day or genomes per hour, or hours per genome, we can now and have recently done a demonstration sequencing 2,000 complete microbial genomes in one machine run. The pace is changing quite substantially.

I view DNA as an analogue coding molecule, and when we sequence the DNA, we are converting that analogue code into digital code; the 1s and 0s in the computer are very similar to the dots and dashes of Schrodinger’s metaphor. I call this process “digitizing biology”.

[Enlarge]

Numerous scientists have drawn the analogy between computers and biology. I take these even further. I describe DNA as the software of life and when we activate a synthetic genome in a recipient cell I describe it as booting up a genome, the same way we talk about booting up a software in a computer.

June 23rd of this year would have been Alan Turing’s 100th birthday. Turing described what has become to be known as Turing Machines. The machine described a set of instructions written on a tape. He also described the Universal Turing Machine, which was a machine that could take that set of instructions and rewrite them, and this was the original version of the digital computer. His ideas were carried further in the 1940s by John von Neumann, and as many people know he conceived of the self-replicating machine. Von Neumann’s machine consisted of a series of cells that uncovered a sequence of actions to be performed by the machine, and using the writing head, the machine can print out a new pattern of cells, allowing it to make a complete copy of itself on the tape. Many scientists have made the obvious analogy between Turing machines and biology. The latest was most recently in nature by Sydney Brenner who played a role in almost all the early stages of molecular biology. Brenner wrote an article about Turing and biology, and in this he argued that the best examples of Turing and von Neumann machines are from biology with the self-replicating code, the internal description of itself, and how this is the key kernel of biological theory.

While software was pouring out of sequencing machines around the world, substantial progress was going on describing the hardware of life, or proteins. In biochemistry the first two decades of the 20th century was dominated by what was called “The colloid theory”. Life itself was explained in terms of the aggregate properties of all the colloidal substances in an organism. We now know that the substances are a collection of three-dimensional protein machines. Each evolved to carry out a very specific task.

Now, these proteins have been described as nature’s robots. If you think about it for every single task in the cell, every imaginable task as described by Tanford and Reynolds, “there is a unique protein to carry out that task. It’s programmed when to go on, when to go off. It does this based on its structure. It doesn’t have consciousness; it doesn’t have a control from the mind or higher center. Everything a protein does is built into its linear code, derived from the DNA code”.

[Enlarge]

There are multiple protein types, everything you know about your own life, and most of it is protein-derived. About a quarter of your body is collagen, it’s a matrix protein just built up of multiple layers. We have rubber-like proteins that form blood vessels as well as the lung tissue, and we have transporters that move things in and out of cells, and enzymes that copy DNA, metabolize sugars, et cetera.

[Movie]

The most important breakthroughs, outside of the genetic code, was in determining the process of protein synthesis. To show you how recent all of this is, this is the three-dimensional structure of the bacterial ribosome determined in 2005, and the three-angstrom structure of the eukaryotic chromosome, which was just determined and published in December of last year. These ribosomes are extraordinary molecules. They are the most complex machinery we have in the cell, as you can see there are numerous components to it. I try to think of this as maybe the Ferrari engine of the cell. If the engine can’t convert the messenger RNA tape into proteins, there is no life. If you interfere with that process, you kill life. It’s the major antibiotics that we all know about, the amino glycosides, tetracycline, chloramphenicol, erythromycin, et cetera, all kill bacterial cells by interfering with the function of the ribosome.

The ribosome is clearly the most unique and special structure in the cell. It has seven major RNA chains, including three tRNA chains and one messenger RNA. It has 47 different proteins going into the structure and one newly synthesized protein chain, and a size of several million Daltons. This is the heart of all biology. We would not have cells, we would not have life without this machine that converts the linear DNA code into proteins working.

The process of converting the DNA code into protein starts with the synthesis of mRNA from DNA, called “transcription”, and protein synthesis from the mRNA is called “translation”. If these processes were highly reliable, life would be very different, and perhaps we would not need the same kind of information-driven system. If you were building a factory to build automobiles that worked the way the ribosome did, you would be out of business very quickly. A significant fraction of all the proteins synthesized, are degraded shortly after synthesis, because they formed the wrong confirmations and they aggregate in the cell, or cause some other problem.

Transfer RNA brings in the final amino acid to a growing peptide chain coming out of the ribosome. The next step is truly one of the most remarkable in nature, and that’s the self-folding of the proteins. The number of potential protein confirmations is enormous: if you have 100 amino acids in a protein then there are on the order of 2 to the 100th power different conformations, and it would take about ten to the tenth years to try each conformation. But built into the linear protein code with each amino acid are the folding instructions in turn determined by the linear genetic code. As a result these processes happen very quickly.

[Movie]

Here’s a movie that spreads out 6 microseconds of protein folding over several seconds, to show you folding of a small protein. This is the end folded structure that starts with a linear protein, and over 6 microseconds it goes through all the different confirmations to try and get to the final fold.

Somehow the linear amino acid code limits the number of possible folds it can take, but each protein tries a large number of different ones, and if it gets them wrong in the end, the protein has to be degraded very quickly or it will cause problems. Imagine all the evolutionary selection that went into these processes, because the protein structure determines its rate of folding, as well as the final structure and hence its function. In fact, the end terminal amino acid determines how fast a protein is degraded. This is now called “The “N” rule pathway for protein degradation”. For example, if you had the amino acid, lysine, or arginine or tryptophan as the N terminal on a protein beta-galactosidase, it results in a protein with a half-life of 120 seconds in E. coli, or 180 seconds in a eukaryotic cell, yeast. Whereas you have three different amino acids, serine, valine or methionine, you get a half-life of over ten hours of in bacteria, over 30 hours in yeast.

Because of the instability, aggregation and turnover of proteins in a cell one of the most important pathways in any cell is the proteolytic pathway. Degradation of proteins and protein fragments is of vital importance as they can be highly toxic to the cell by forming intracellular aggregates.

A bacterial cell in an hour or less will have to remake of all its proteins. Our cells make proteins at a similar rate, but because of protein instability and the random folding and misfolding of proteins, protein aggregation is a key problem. We have to constantly synthesize new proteins, and if they fold wrong, you have to get rid of them or they clog up the cell, the same way as if you stop taking out the trash in a city everything comes to a halt. Cells work the same way. You have to degrade the proteins; you have to pump the trash out of the cell. Miss folded proteins can become toxic very quickly. There are a number of diseases known to most of you that are due to misfolding or aggregation, Alzheimer’s and mad cow disease are examples of diseases caused by the accumulation of toxic protein aggregates.

Life is a process of dynamic renewal. We’re all shedding about 500 million skin cells every day. That is the dust that accumulates in your home; that’s you. You shed your entire outer layer of skin every two to four weeks. You have five times ten to the 11th blood cells that die every day. If you’re not constantly synthesizing new cells, you die.

Several human diseases arise from protein misfolding leaving too little of the normal protein to do its job properly. The most common hereditary disease of this type is cystic fibrosis. Recent research has clearly shown that the many, previously mysterious symptoms of this disorder all derive from lack of a protein that regulates the transport of the chloride ion across the cell membrane. More recently scientists have shown that by far the most common mutation underlying cystic fibrosis hinders the dissociation of the transport regulator protein from one of its chaperones. Thus, the final steps in normal folding cannot occur, and normal amounts of active protein are not produced.

I’m trying to leave you with a notion that life is a process of dynamic renewal. We’re all shedding about 500 million skin cells every day. That is the dust that accumulates in your home; that’s you. You shed your entire outer layer of skin every two to four weeks. You have five times ten to the 11th blood cells that die every day. If you’re not constantly synthesizing new cells, you die. During normal organ development about half of all of our cells die. Everything in life is constantly turning over and being renewed by rereading the DNA software and making new proteins.

Life is a process of dynamic renewal. Without our DNA, without the software of life cells die very rapidly. Rapid protein turnover is not just an issue for bacterial cells; our 100 trillion human cells are constantly reading the genetic code and producing proteins. A recent study assaying 100 proteins in living human cancer cells showed half-lives that ranged between 45 minutes and 22.5 hours.

[Enlarge]

Now, as you know, all life is cellular, and the cellular theory of life is that you can only get life from preexisting cells, and all kinds of special vitalistic parameters have been attributed to cells over time. This slide shows what an artist’s view of what a cell cytoplasm might look like. It’s quite a crowded place, it’s relatively viscous, it’s not this empty bag with a few proteins floating around in it, and it’s a very unique environment. About one quarter of the proteins are in solid phase.

A key tenant of chemistry is the notion of “synthesis as proof”. This perhaps dates back to 1828 when Friedrich Wöhler synthesized urea. The Wöhler synthesis is of great historical significance because for the first time an organic compound was produced from inorganic reactants. Wöhler synthesis of urea was heralded as the end of vitalism because vitalists thought that you could only get organic molecules from living entities. Today there are tens of thousands of scientific papers published that either have “proof by synthesis” as the starting point or as a key part of the title.

We decided to take the approach of proof by synthesis that DNA codes for everything a cell produces and does. Back in 1995 when we sequenced the first genome, we sequenced a second genome that year. For comparative purposes we were looking for the cell with the smallest genome, and we chose mycoplasma genitalium. It has 482 protein-coding genes, and 43 RNA genes. We asked simple questions, how many of these genes are essential for life?; what’s the smallest number of cells needed for a cellular machinery? After extensive experimentation we ultimately decided that the only way to answer these questions would be to design and construct a minimal DNA genome of the cell. As soon as we started down that route, we had new questions. Would chemistry even allow us to synthesize a bacterial chromosome? And if we could, would we just have a large piece of DNA, or could we boot it up in the cell like a new chemical piece of software?

We decided to start where DNA history started, with Phi X 174. We chose it as a test synthesis because you can make very few changes in the genetic code of Phi X without destroying viral particles. Clyde Hutchison, who helped sequence Phi X in Sanger’s lab, Ham Smith and I developed a new series of techniques to correct the errors that take place when you synthesize DNA. The machines that synthesize DNA are not particularly accurate; the longer the piece of DNA you make, the more spelling errors. We had to find ways to correct errors.

[Enlarge]

Starting with the digital code we synthesized DNA fragments and assembled the genome. We corrected the errors and in the end had a 5,386-basepair piece of DNA that we inserted into E. coli, and this is the actual photo of what happened. The E. coli recognized the synthetic piece of DNA as normal DNA, and the proteins, being robots, just started reading the synthetic genetic code, because that’s what they’re programmed to do. They made what the DNA code told them to do, to make the viral proteins. The virus proteins self-assembled and formed a functional virus. The virus showed its gratitude by killing the cells, which is how we effectively get these clear plaques in a lawn of bacterial cells. I call this a situation where the “software is building its own hardware”. All we did was put a piece of DNA software in the cell, and we got out a protein virus with a DNA core.

Now, our goal is not to make viruses, we wanted to make something substantially larger. We wanted to make an entire chromosome of a living cell. But we thought if we could make viral-sized chromosomes accurately, maybe we could make 100 or so of those and find a way to put them together. That’s what we did.

The teams starting with synthetic segments the size of Phi-X, we sequentially assembled larger and larger segments. At each stage we sequenced verified them before going on to the next assembly stage. We put four pieces together creating segments that were 24,000 letters long. We would clone the segments in E. coli, sequence them, and assemble three together, to get pieces that were 72,000 base pairs. This was a very laborious process that took about a year and a half to do.

We put two of the 72,000bp segments together to obtain new segments representing one quarter of the genome each at 144,000 letters in length. This was way beyond the largest piece that had ever been synthesized by other of only 30,000 base pairs. E. coli didn’t like these large pieces of synthetic DNA in them, so we switched to yeast. I found out last night this is a city that loves beer that is produced from the same brewer’s yeast. Aside from fermentation, this little cell has remarkable properties of assembling DNA.

[Enlarge]

All we had to do was put the four synthetic quarter molecules into yeast with a small synthetic yeast centromere, and yeast automatically assembled these pieces together. That gave us the first synthetic bacterial chromosome, and this is what we published in 2008. This was the largest chemical of a defined structure ever synthesized.

We continued to work on DNA synthesis, and somebody who started out as a young post-doc, Dan Gibson, came up with a substantial breakthrough. Instead of the hours, to days, to years, he found out that by putting three enzymes together with all the DNA fragments in one tube at 50 degrees centigrade for a little while, they would automatically assemble these pieces. DNA assembly went from days down to an hour. This was a breakthrough for a number of reasons. Most importantly, it allows us now to automate genome synthesis. Having a simple one-step method allows us to go from the digital code in the computer to the analogue code of DNA in a robotic fashion. This means scaleing up substantially. We proved this initially by just one step synthesizing the mouse mitochondrial genome.

I had two teams working, one on the chemistry and one on the biology. It turns out the biology ended up being more difficult than the chemistry. How do you boot up a synthetic chromosome in a cell? This took substantial time to work out, and this paper that we published in 2007 is one of the most important for understanding how cells work and what the future of this field brings.

This paper is where we describe genome transplantation, and how by simply changing the genetic code, the chromosome, in one cell, swapping it out for another, we converted one species into another. Because this is so important to the theme of what we’re doing, I’m going to walk you through this a little bit. And by the way, these are two of the scientists pictured here that led this effort, Carole Lartigue and John Glass, and the team working with them.

[Enlarge] [Enlarge]

We started by isolating the chromosome from a cell called M. mycoides. Chromosomes are enshrouded with proteins, which is why there was confusion for so many years over whether the proteins or the DNA was the genetic material. We simply did what Avery did, we treated the DNA with proteolytic enzymes, removing all the proteins because if we’re making a synthetic chromosome, we need to know can naked DNA work on its own, or are there going to be some special proteins needed for transplantation? We added a couple of gene cassettes to the chromosome, one so we could select for it, and another so it turns cells bright blue if it gets activated. After considerable effort we found a way to transplant this genome into a recipient cell, a cell M. capricolum, which is about the same distance apart genetically from M. mycoides as we are from mice. So relatively close, on the order of 10 percent or more different.

Life is based on DNA software. We’re a DNA software system, you change the DNA software, and you change the species. It’s a remarkably simple concept, remarkably complex in its execution.

[Enlarge]

Let me show you what happened with this very sophisticated movie. We inserted the M. mycoides chromosome into the recipient cell. Just as with the Phi X, as soon as we put this DNA into this cell, the protein robots started producing mRNA, started producing proteins. Some of the early proteins produced were the restriction enzymes that Ham Smith discovered in 1970, we think that they recognized the initial chromosome in the cell as foreign DNA and chewed it up. Now we have the body and all the proteins of one species, and the genetic software of another. What happened?

[Enlarge]

In a very short period of time we have these bright blue cells. When we interrogated the cells, they had only the transplanted genome, but more importantly, when we sequenced the proteins in these cells, there wasn’t a single protein or other molecule from the original species. Every protein in the cell came from the new DNA that we inserted into the cell. Life is based on DNA software. We’re a DNA software system, you change the DNA software, and you change the species. It’s a remarkably simple concept, remarkably complex in its execution.

Now, we had a problem, that some of you may have picked up on or have read about. We were assembling the bacterial chromosome in a eukaryotic cell. If we’re going to take the synthetic genome and do the transplantations, we had to find a way to get the genome out of yeast to transplant it back into the bacterial cell. We developed a whole new way to grow bacteria chromosomes in yeast as eukaryotic chromosomes. It was remarkably simple in the end. All we do is add a very small synthetic centromere from yeast to the bacterial chromosome, and all of a sudden it turns into a stable eukaryotic chromosome.

[Enlarge] [Enlarge]

Now we can stabley grow bacterial chromosomes in yeast. We had the situation where we had the M. mycoides chromosome in the eukaryotic cell, we could try isolating it and doing a transplantation. The trouble is, it didn’t work. This little problem took us two and a half years to solve of why it didn’t work. It turns out when we initially did the transplantations taking the chromosome out of the M. mycoides cell, that DNA had been methylated, and that’s how cells protect their own DNA from interloping species. We proved this by isolating the six methylases, and methylating the DNA when we took it out of yeast. If we methylated the DNA, we could then do the transplantation. We proved this ultimately by in the recipient cell removing the restriction enzyme system, and in that case we can just transplant the naked unmethylated DNA because there’s nothing to destroy the DNA in the cell.

[Enlarge]

We were now at the point where we thought we had solved all the problems. We could create the new bacterial strains from the bacterial genomes cloned in yeast. We had this new cycle, we could work our way around the circle, we could add a centromere to the bacterial chromosome and turn it into a eukaryotic chromosome. The advantages, for those of you who work with bacteria, most bacteria do not have genetic systems, which is why most scientists don’t work with them. As soon as you put that bacterial genome in yeast, we have the complete repertoire of genetic tools available in yeast such as homologous recombination. We can make rapid changes in the genome, isolate the chromosome, methylate it if necessary, and do a transplantation to create a highly modified cell.

With all the new techniques and success we decided to synthesize the much larger M. mycoides genome. Dan Gibson led the effort that started with pieces that were 1,000 letters long. We put ten of those together to make these over 10,000 letters long. We put ten of those together to make pieces now that are 100,000 letters long, and we had eleven 100,000 base pair pieces. We put them in yeast, which assembled the genome. We knew how to transplant it out of yeast and we did the transplantation and it didn’t work.

Those of you who are software engineers know that software engineers have debugging software to tell them where the problems are in their code. So we had to develop the biological version of debugging software, which was basically substituting natural pieces of DNA for the synthetic ones so we could find out what was wrong. We found out we could have 10 of the 11 synthetic pieces, and the last piece had to be the native genome DNA to get a living cell. We re-sequenced our synthetic segment and found one letter wrong in an essential gene that made the difference between life and no life. The deletion was in the DNAa gene, which is an essential gene for life. We corrected that error, the one error out of 1.1 million, and we got the first actual synthetic cell from the genome transplants.

[Enlarge]

One of the ways that we knew that what we had was a synthetic cell was by watermarking the DNA so we could always tell our synthetic species from any naturally occurring one. Now about the watermarks, when we watermarked the first genome we just used the single letter amino acid code to write the authors names in the DNA. We were accused of not having much of an imagination. For this new genome we went a little bit farther by adding three quotations from the literature. But first the team developed a whole new code where by we could write the English language complete with numbers and punctuation in DNA code. It was quite interesting. We sent the paper to Science for a review, and one of the reviewers sent back their review written in DNA code, much to the frustration of the Science editor, who could not decipher it. (Laughter) But the reviewer’s DNA code was based on the ASCII code, and with biology that creates a problem because you can get long stretches of new without a stop codon. We developed this new code that puts in very frequent stop codons, because the last thing you want to do is put in a quote from James Joyce and have it turn into a new toxin that kills the cell or kills you. You didn’t know poetry could do that, I guess.

We built in the names of the 46 scientists that contributed to the effort, and also there was a message with an URL. So being the first species to have the computer as a parent, we thought it was appropriate it should have its own Web addressed built into the genome. As people solved this code, they would send an e-mail to the Web address written in the genome. Once numerous people solved it, we made this available.

The three quotes are the first one, and the probably most important one to this country is James Joyce, “To live, to err, to fall, to triumph, to recreate life out of life.” Somehow that seemed highly appropriate. The second is from Oppenheimer’s biography, “American Prometheus”. “See things not as they are, but as they might be.” In the third, from Richard Feynman, “What I cannot build, I cannot understand.”

Everybody thought this was very cool until a few months after this appeared, we got a letter from James Joyce’s estate attorney saying, “Did you seek permission to use this quotation?” In the US, at least, there are fair use laws that allow you to quote up to a paragraph without seeking permission. We sort of dismissed that one, and James Joyce was dead, and we didn’t know how to ask him anyway.

Then we started getting an e-mail trail from a Caltech scientist saying we misquoted Richard Feynman. But if you look on the Internet, this is the quotation that you find everywhere. We argued back this is what we found, and this is what was in his biography. So to prove his point, he sent a picture of Feynman’s blackboard with the original quotation, and it was, “What I cannot create, I do not understand.” I think it’s a much better quotation, and we’ve gone back to correct the DNA code so that Feynman can rest much more peacefully.

[Enlarge]

All living cells that we know of on this planet are DNA software driven biological machines comprised of hundreds to thousands of protein robots coded for by the DNA software. The protein robots carry out precise biochemical functions developed by billions of years of evolutionary software changes.

Science can go much further now, and there is an exciting paper out of Stanford with a team led by Markus Covert and that included John Glass from my institute using the work on the mycoplasma cell to do the first complete mathematical modeling of a cell. But this is coming out in Cell next week. It’s going to be an exciting paper. We can go from the digital code to the genetic code, and now modeling the entire function of the cell in a computer, going the complete digital circle. We are going even further now, by using computer software to design new DNA software to create a new synthetic life.

I hope it is becoming clear that all living cells that we know of on this planet are DNA software driven biological machines comprised of hundreds to thousands of protein robots coded for by the DNA software. The protein robots carry out precise biochemical functions developed by billions of years of evolutionary software changes. The software codes for the linear protein sequence, which in turn determines the rate of folding as well as the final 3-dimensional structure and function of the protein robot. The primary sequence determines the stability of the protein and therefore its dynamic regulation in the cell. By making a copy of the DNA software, cells have the ability to self-replicate. All these processes require energy. From all the genomes we have sequenced we have seen that there is a range of mechanisms for the generation of cellular energy molecules through a process we call metabolism. Some cells are able to transport sugars across the membrane into the cell and by some now well defined enzymatic processes capture the chemical energy in the sugar molecule and to supply it to the required cellular processes. Other cells such as the autotroph Methanococcus jannaschii use only inorganic chemicals to make every molecule in the cell while providing the cellular energy. These cells do this by a series of proteins that convert carbon dioxide into methane to generate cellular energy molecules and to provide the carbon to make proteins. These processes are all coded for in the genetic code.

Schrödinger citing the second law of thermodynamics (entropy principal)-the natural tendency of things to go over into disorder, described his notion of “order based on order”. We have now shown using synthetic DNA genomes that when you put new DNA software into the cell the protein robots coded for are produced, changing the cellular phenotype. When you change the DNA software you change the species. This is consistent with Schrodinger’s Code-script and “An organism’s astonishing gift of concentrating a ‘stream of order’ on itself …”

We can digitize life, and we generate life from the digital world. Just as the ribosome can convert the analogue message in mRNA into a protein robot, it’s becoming standard now in the world of science to convert digital code into protein viruses and cells. Scientists send digital code to each other instead of sending genes or proteins. There are several companies around the world that make their living by synthesizing genes for scientific labs. It’s faster and cheaper to synthesize a gene than it is to clone it, or even get it by Federal Express.

As an example BARDA in the US government sends us as a part of our synthetic genomic flu virus program with Novartis, an email with a test pandemic flu virus sequence. We convert the digital sequence into a flu virus genome in less than 12 hours. We are in the process of building a simple smaller faster converter device, “a digital to biological converter”, that in a fashion similar to the telephone where digital information is converted to sound; we can send digital DNA code at the close to the speed of light and convert the digital information into proteins, viruses and living cells. With a new flu pandemic we could digitally distribute a new vaccine in seconds around the world, perhaps even to each home in the future.

Currently all life is derived from other cellular life including our synthetic cell. This will change in the near future with the discovery of the right cocktail of enzymes, ribosomes, and chemicals including lipids together with the synthetic genome to create new cells and life forms without a prior cellular history. Look at the tremendous progress in the 70 years since Schrodinger’s lecture on this campus. Try to imagine 70 years from now in the year 2082 what will be happening. With the success of private space flight, the moon and Mars will be clearly colonized. New life forms for food or energy production or for new medicines will be sent as digital information to be converted back into life forms in the 4.3 to 21 minutes that it takes for a digital wave to go from earth to Mars.

I suggested in place of sending living humans to distant galaxies that we can send digital information together with the means to boot it up in tiny space vessels. More importantly and as I will speak to on Saturday evening synthetic life will enable us to understand all life on this planet and to enable new industries to produce food, energy, water and medicine as we add 1 billion new humans to earth every 12 years.

Schrodinger’s “What is Life?” helped to stimulate Jim Watson and Francis Crick to help kick off this new era of DNA science. One can only hope that the newest frontier of synthetic life will have a similar impact on the future.

REMARKS BY JAMES D. WATSON

JAMES WATSON: In 1963, which was 10 years after the Double Helix, I began putting together a book which became the Molecular Biology of the Gene. It was before we knew the complete code, it was after what Nirenberg had shown and so I thought we knew the general principles. I thought initially the title we would use for the book was This Is Life, but I thought that will be controversial because I hadn’t explained everything, so it just became The Molecular Biology of the Gene.