Classification of Microbiota

An Overview of Clinical Microbiology, Classification, and Antimicrobial Resistance

Author and Curator: Larry H. Bernstein, MD, FCAP


Introduction: An Overview of Microbiology

This is a contribution to a series of pieces on the history of biochemistry, molecular biology, physiology and medicine in the 20th century. Here I describe the microorganisms commonly encountered in the clinical laboratory; how specimens are collected, plated, cultured and identified; and antibiotic sensitivity testing and resistant strains.

I begin by recognizing that many strains common in the environment are not pathogenic, while other bacteria are pathogenic.

In addition, some bacteria coexist with the host in particular body habitats, so that we can map the expected types to locations such as the skin, the mouth and nasal cavities, the colon, the vagina and the urinary system. Meningitis can occur as a result of extension from the nasal cavity to the brain. When bacteria invade the circulation, the condition is referred to as septicemia, and the bacteria can cause valvular heart damage.

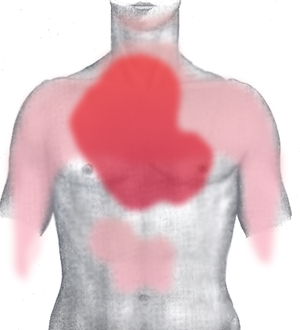

Bacteriology can be traced to origins in the 19th century. The clinical features of localized infection are classically described as redness, heat, a raised lesion (pustule), and exudate (serous or purulent, that is, watery or cellular). This holds not only for a focal lesion (as in the skin) but also for pneumonia, urinary infection, and genital infection. The infection may be accompanied by cough, by bloody cough and wheezing, or by cloudy urine. In septicemia there is fever, and there may be seizures or delirium.

Collection and handling of specimens

Specimens are collected under sterile technique by a nurse or physician and sent to the laboratory as a swab or as a blood specimen. In a febrile illness, blood cultures may be drawn from opposite arms, with another drawn an hour later, because bacteria may be seeded into the circulation cyclically. If the specimen is collected from a site of infection, a swab may be smeared onto a glass slide for Gram staining.

Syphilis and tuberculosis are special cases that I set aside. I shall not go into virology either, although I may refer to smallpox, influenza, polio and HIV under epidemics. The first step in identification is the Gram stain, developed in the 19th century. Organisms of the skin are typically Gram positive and appear blue on staining. They are cocci (spherical cells) arranged in characteristic clusters (staphylococci), chains (streptococci) or pairs (diplococci, e.g., pneumococcus); Gram-positive cocci from the intestine include the enterococci. Organisms that stain red are Gram negative. Gram-negative rods are often coliforms, which belong to the Enterobacteriaceae. Meningococci are Gram-negative cocci. So we have certain information about these organisms before we plate them for growth.

Laboratory growth characteristics

The specimen is applied to one or more agar plates with a metal rod applicator. Each plate contains a growth medium, or a growth inhibitor, that favors certain species over others. The bacteria are grown at 37 °C in an incubator, and colonies develop that are white or nonwhite, smooth or wrinkled. The appearance of the colonies is characteristic for certain strains. If there is no contamination, all of the colonies look the same. The next step is to:

- Gram stain from a colony

- Transfer samples from the colony to a series of growth media that identify presence or absence of specific nutrient requirements for growth (which is presumed from the prior findings).

In addition, colony samples are grown on agar to which antibiotic tabs are applied. The tabs either allow or repress growth. Some 50 years ago the infectious disease physician and microbiologist Abraham Braude would culture the bacteria on agar plates that had a gradient of antibiotic, to find the concentration that would inhibit growth.

Principles of Diagnosis (Extracts)

By John A. Washington

The clinical presentation of an infectious disease reflects the interaction between the host and the microorganism. This interaction is affected by the host immune status and microbial virulence factors. Signs and symptoms vary according to the site and severity of infection. Diagnosis requires a composite of information, including history, physical examination, radiographic findings, and laboratory data.

Microbiologic Examination

Direct Examination and Techniques: Direct examination of specimens reveals gross pathology. Microscopy may identify microorganisms. Immunofluorescence, immuno-peroxidase staining, and other immunoassays may detect specific microbial antigens. Genetic probes identify genus- or species-specific DNA or RNA sequences.

Culture: Isolation of infectious agents frequently requires specialized media. Nonselective (noninhibitory) media permit the growth of many microorganisms. Selective media contain inhibitory substances that permit the isolation of specific types of microorganisms.

Microbial Identification: Colony and cellular morphology may permit preliminary identification. Growth characteristics under various conditions, utilization of carbohydrates and other substrates, enzymatic activity, immunoassays, and genetic probes are also used.

Serodiagnosis: A high or rising titer of specific IgG antibodies or the presence of specific IgM antibodies may suggest or confirm a diagnosis.

Antimicrobial Susceptibility: Microorganisms, particularly bacteria, are tested in vitro to determine whether they are susceptible to antimicrobial agents.

Diagnostic medical microbiology is the discipline that identifies etiologic agents of disease. The job of the clinical microbiology laboratory is to test specimens from patients for microorganisms that are, or may be, a cause of the illness and to provide information (when appropriate) about the in vitro activity of antimicrobial drugs against the microorganisms identified (Fig. 1).

Laboratory procedures used in confirming a clinical diagnosis of infectious disease with a bacterial etiology

http://www.ncbi.nlm.nih.gov/books/NBK8014/bin/ch10f1.jpg

A variety of microscopic, immunologic, and hybridization techniques have been developed for rapid diagnosis

From: Chapter 10, Principles of Diagnosis

Medical Microbiology. 4th edition.

Baron S, editor.

Galveston (TX): University of Texas Medical Branch at Galveston; 1996.

For immunologic detection of microbial antigens, latex particle agglutination, coagglutination, and enzyme-linked immunosorbent assay (ELISA) are the most frequently used techniques in the clinical laboratory. Antibody to a specific antigen is bound to latex particles or to a heat-killed and treated protein A-rich strain of Staphylococcus aureus to produce agglutination (Fig. 10-2). There are several approaches to ELISA; the one most frequently used for the detection of microbial antigens uses an antigen-specific antibody that is fixed to a solid phase, which may be a latex or metal bead or the inside surface of a well in a plastic tray. Antigen present in the specimen binds to the antibody as in Fig. 10-2. The test is then completed by adding a second antigen-specific antibody bound to an enzyme that can react with a substrate to produce a colored product. The initial antigen-antibody complex forms in a manner similar to that shown in Figure 10-2. When the enzyme-conjugated antibody is added, it binds to previously unbound antigenic sites, and the antigen is, in effect, sandwiched between the solid phase and the enzyme-conjugated antibody. The reaction is completed by adding the enzyme substrate.

Figure 2 Agglutination test in which inert particles (latex beads or heat-killed S aureus Cowan 1 strain with protein A) are coated with antibody to any of a variety of antigens and then used to detect the antigen in specimens or in isolated bacteria

http://www.ncbi.nlm.nih.gov/books/NBK8014/bin/ch10f2.jpg

Genetic probes are based on the detection of unique nucleotide sequences within the DNA or RNA of a microorganism. Once such a unique nucleotide sequence, which may represent a portion of a virulence gene or of chromosomal DNA, is found, it is isolated and inserted into a cloning vector (plasmid), which is then transformed into Escherichia coli to produce multiple copies of the probe. The sequence is then reisolated from plasmids and labeled with an isotope or substrate for diagnostic use. Hybridization of the sequence with a complementary sequence of DNA or RNA follows cleavage of the double-stranded DNA of the microorganism in the specimen.

The use of molecular technology in the diagnosis of infectious diseases has been further enhanced by the introduction of gene amplification techniques, such as the polymerase chain reaction (PCR), in which DNA polymerase is able to copy a strand of DNA by elongating complementary strands of DNA that have been initiated from a pair of closely spaced oligonucleotide primers. This approach has had major applications in the detection of infections due to microorganisms that are difficult to culture (e.g. the human immunodeficiency virus) or that have not as yet been successfully cultured (e.g. the Whipple’s disease bacillus).

Solid media, although somewhat less sensitive than liquid media, provide isolated colonies that can be quantified if necessary and identified. Some genera and species can be recognized on the basis of their colony morphologies.

In some instances one can take advantage of differential carbohydrate fermentation capabilities of microorganisms by incorporating one or more carbohydrates in the medium along with a suitable pH indicator. Such media are called differential media (e.g., eosin methylene blue or MacConkey agar) and are commonly used to isolate enteric bacilli. Different genera of the Enterobacteriaceae can then be presumptively identified by the color as well as the morphology of colonies.

Culture media can also be made selective by incorporating compounds such as antimicrobial agents that inhibit the indigenous flora while permitting growth of specific microorganisms resistant to these inhibitors. One such example is Thayer-Martin medium, which is used to isolate Neisseria gonorrhoeae. This medium contains vancomycin to inhibit Gram-positive bacteria, colistin to inhibit most Gram-negative bacilli, trimethoprim-sulfamethoxazole to inhibit Proteus species and other species that are not inhibited by colistin, and anisomycin to inhibit fungi. The pathogenic Neisseria species, N gonorrhoeae and N meningitidis, are ordinarily resistant to the concentrations of these antimicrobial agents in the medium.

Infection of the bladder (cystitis) or kidney (pyelonephritis) is usually accompanied by bacteriuria of about ≥ 10^4 CFU/ml. For this reason, quantitative cultures (Fig. 10-3) of urine must always be performed. For most other specimens a semiquantitative streak method (Fig. 10-3) over the agar surface is sufficient. For quantitative cultures, a specific volume of specimen is spread over the agar surface and the number of colonies per milliliter is estimated.
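The arithmetic behind a quantitative culture can be sketched in a few lines. This is a minimal illustration, assuming a single plate and a known plated volume; the function names and the 0.001 ml inoculum in the example are hypothetical and are not taken from the chapter.

```python
def cfu_per_ml(colony_count: int, plated_volume_ml: float) -> float:
    """Estimate colony-forming units per millilitre from one plate."""
    if plated_volume_ml <= 0:
        raise ValueError("plated volume must be positive")
    return colony_count / plated_volume_ml


def meets_threshold(colony_count: int, plated_volume_ml: float,
                    threshold_cfu_per_ml: float = 1e4) -> bool:
    """True when the estimate reaches the bacteriuria level quoted above."""
    return cfu_per_ml(colony_count, plated_volume_ml) >= threshold_cfu_per_ml


# Hypothetical plate: 25 colonies grown from an assumed 0.001 ml inoculum.
estimate = cfu_per_ml(25, 0.001)
print(f"{estimate:.0f} CFU/ml, reaches threshold: {meets_threshold(25, 0.001)}")
```

The smaller the plated volume, the more each colony counts toward the estimate, which is why the volume has to be fixed in advance for the result to be quantitative.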

Identification of bacteria (including mycobacteria) is based on growth characteristics (such as the time required for growth to appear or the atmosphere in which growth occurs), colony and microscopic morphology, and biochemical, physiologic, and, in some instances, antigenic or nucleotide sequence characteristics. The selection and number of tests for bacterial identification depend upon the category of bacteria present (aerobic versus anaerobic, Gram-positive versus Gram-negative, cocci versus bacilli) and the expertise of the microbiologist examining the culture. Gram-positive cocci that grow in air with or without added CO2 may be identified by a relatively small number of tests. The identification of most Gram-negative bacilli is far more complex and often requires panels of 20 tests for determining biochemical and physiologic characteristics.

Antimicrobial susceptibility tests are performed by either disk diffusion or a dilution method. In the former, a standardized suspension of a particular microorganism is inoculated onto an agar surface to which paper disks containing various antimicrobial agents are applied. Following overnight incubation, any zone diameters of inhibition about the disks are measured. An alternative method is to dilute on a log2 scale each antimicrobial agent in broth to provide a range of concentrations and to inoculate each tube or, if a microplate is used, each well containing the antimicrobial agent in broth with a standardized suspension of the microorganism to be tested. The lowest concentration of antimicrobial agent that inhibits the growth of the microorganism is the minimal inhibitory concentration.
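The dilution method lends itself to a short sketch. The code below assumes an idealized series in which every well at or above the inhibitory concentration shows no growth; the function names and the example concentrations are hypothetical, chosen only to illustrate the definition of the minimal inhibitory concentration.

```python
from typing import Dict, List, Optional


def twofold_dilutions(highest: float, steps: int) -> List[float]:
    """Concentrations halved at each step (a log2 series), highest first."""
    return [highest / (2 ** k) for k in range(steps)]


def minimal_inhibitory_concentration(growth: Dict[float, bool]) -> Optional[float]:
    """Lowest concentration with no visible growth, or None if every well grew."""
    inhibited = [conc for conc, grew in growth.items() if not grew]
    return min(inhibited) if inhibited else None


# Hypothetical read-out: growth only in wells below 4 (units assumed, e.g. ug/ml).
series = twofold_dilutions(64, 8)          # 64, 32, 16, 8, 4, 2, 1, 0.5
readout = {conc: conc < 4 for conc in series}
print(minimal_inhibitory_concentration(readout))
```

A real read-out can contain skipped wells or trailing growth, which this sketch does not attempt to handle.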

Classification Principles

This Week’s Citation Classic®: Sneath P H A & Sokal R R. Numerical taxonomy: the principles and practice of numerical classification. San Francisco: Freeman, 1973. 573 p. [Medical Research Council Microbial Systematics Unit, Univ. Leicester, England and Dept. Ecology and Evolution, State Univ. New York, Stony Brook, NY]

Numerical taxonomy establishes classification of organisms based on their similarities. It utilizes many equally weighted characters and employs clustering and similar algorithms to yield objective groupings. It can be extended to give phylogenetic or diagnostic systems and can be applied to many other fields of endeavour.

Mathematical Foundations of Computer Science 1998

Lecture Notes in Computer Science Volume 1450, 1998, pp 474-482

Date: 28 May 2006

Positive Turing and truth-table completeness for NEXP are incomparable 1998

Levke Bentzien

The truth-table method [matrix method] is one of the decision procedures for sentence logic (q.v., §3.2). The method is based on the fact that the truth value of a compound formula of sentence logic, construed as a truth-function, is determined by the truth values of its arguments (cf. “Sentence logic” §2.2). To decide whether a formula A is a tautology or not, we list all possible combinations of truth values to the variables in A: A is a tautology if it takes the value truth under each assignment.
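The decision procedure just described can be sketched directly: enumerate every assignment and check that the formula is true under each. The helper name `is_tautology` and the sample formula are illustrative assumptions, not part of the quoted text.

```python
from itertools import product
from typing import Callable


def is_tautology(formula: Callable[..., bool], n_vars: int) -> bool:
    """Evaluate the formula under every assignment of truth values."""
    return all(formula(*values)
               for values in product([True, False], repeat=n_vars))


def implies(a: bool, b: bool) -> bool:
    """Material implication: a -> b."""
    return (not a) or b


# (p -> q) or (q -> p) holds under all four assignments; p or q does not.
print(is_tautology(lambda p, q: implies(p, q) or implies(q, p), 2))
print(is_tautology(lambda p, q: p or q, 2))
```

The table has 2^n rows for n variables, so the method is practical only for formulas with few variables.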

Using ideas introduced by Buhrman et al. ([2], [3]) to separate various completeness notions for NEXP = NTIME(2^poly), positive Turing complete sets for NEXP are studied. In contrast to many-one completeness and bounded truth-table completeness with norm 1, which are known to coincide on NEXP ([3]), whence any such set for NEXP is positive Turing complete, we give sets A and B such that

(1) A is ≤^P_bT(2)-complete but not ≤^P_posT-complete for NEXP

(2) B is ≤^P_posT-complete but not ≤^P_tt-complete for NEXP.

These results come close to optimality since a further strengthening of (1), as was done by Buhrman in [1] for EXP = DTIME(2^poly), seems to require the assumption NEXP = co-NEXP.

Computability and Models

The University Series in Mathematics 2003, pp 1-10

Truth-Table Complete Computably Enumerable Sets

Marat M. Arslanov

We prove a truth-table completeness criterion for computably enumerable sets.

The author’s research was partially supported by Russian Foundation of Basic Research, Project 99-01-00830, and RFBR-INTAS, Project 97-91-71991.

TRUTH TABLE CLASSIFICATION AND IDENTIFICATION*

EUGENE W. RYPKA

Department of Microbiology, Lovelace Foundation for Medical Education and Research,

Albuquerque, N.M. 87108, U.S.A.

Space life sciences 1971-12-1; 3(2): pp 135-156

http://dx.doi.org/10.1007/BF00927988

(Received 15 July, 1971)

Abstract. A logical basis for classification is that elements grouped together and higher categories of elements should have a high degree of similarity with the provision that all groups and categories be disjoint to some degree. A methodology has been developed for constructing classifications automatically that gives nearly instantaneous correlations of character patterns of organisms with time and clusters with apparent similarity. This means that automatic numerical identification will always construct schemes from which disjoint answers can be obtained if test sensitivities for characters are correct. Unidentified organisms are recycled through continuous classification with reconstruction of identification schemes. This process is cyclic and self-correcting. The method also accumulates and analyzes data which updates and presents a more accurate biological picture.

Syndromic classification: A process for amplifying information using S-clustering

Eugene W. Rypka, PhD

http://dx.doi.org/10.1016/S0899-9007(96)00315-2


Rypka’s Method

Rypka’s[1] method[2] utilizes the theoretical and empirical separatory equations shown below to perform the task of optimal classification. The method finds the optimal order of the fewest attributes, which in combination define a bounded class of elements.

Application of the method begins with construction of an attribute-valued system in truth table[3] or spreadsheet form, with elements listed in the leftmost column beginning in the second row. Characteristics[4] are listed in the first row beginning in the second column, with the code name of the data in the upper leftmost cell. The values which connect each characteristic with each element are placed in the intersecting cells. Selecting appropriate characteristics to universally define the class of elements may be the most difficult part of using this method for the classifier.

The elements are first sorted in descending order according to their truth table value, which is calculated from the existing sequence and value of characteristics for each element. Duplicate truth table values or multisets for the entire bounded class reveal either the need to eliminate duplicate elements or the need to include additional characteristics.
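One way to read the sorting step is to treat each row of values as a number written in the radix of the value system, with the leftmost characteristic most significant. The sketch below follows that reading, which is an assumption rather than the source's stated equation; the table contents and function names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def truth_table_value(values: Sequence[int], radix: int) -> int:
    """Read one row as a base-`radix` number, leftmost value most significant."""
    total = 0
    for v in values:
        if not 0 <= v < radix:
            raise ValueError("value outside the radix")
        total = total * radix + v
    return total


def sort_and_find_duplicates(table: Dict[str, Sequence[int]],
                             radix: int) -> Tuple[List[str], List[str]]:
    """Sort elements by descending truth table value and list those sharing a value."""
    value = {name: truth_table_value(row, radix) for name, row in table.items()}
    ranked = sorted(table, key=lambda name: value[name], reverse=True)
    counts = Counter(value.values())
    duplicates = [name for name in ranked if counts[value[name]] > 1]
    return ranked, duplicates


# Hypothetical binary table: elements A and C share the same pattern.
table = {"A": (1, 0, 1), "B": (0, 1, 1), "C": (1, 0, 1), "D": (1, 1, 0)}
ranked, duplicates = sort_and_find_duplicates(table, radix=2)
print(ranked, duplicates)   # ['D', 'A', 'C', 'B'] ['A', 'C']
```

Elements reported as duplicates are the ones that call for either removing a repeated element or adding another characteristic, as the paragraph above notes.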

An empirical separatory value is calculated for each characteristic in the set and the characteristic with the greatest empirical separatory value is exchanged with the characteristic which occupies the most significant attribute position.

Next the second most significant characteristic is found by calculating an empirical separatory value for each remaining characteristic in combination with the first characteristic. The characteristic which produces the greatest separatory value is then exchanged with the characteristic which occupies the second most significant attribute position.

Next the third most significant characteristic is found by calculating an empirical separatory value for each remaining characteristic in combination with the first and second characteristics. The characteristic which produces the greatest empirical separatory value is then exchanged with the characteristic which occupies the third most significant attribute position. This procedure may continue until all characteristics have been processed or until one hundred percent separation of the elements has been achieved.
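The stepwise selection described above can be sketched as a greedy ordering. The separatory value is taken here to be the number of element pairs that the chosen characteristics tell apart, (G^2 - sum of squared multiset counts) / 2. That definition is an assumption, chosen to be consistent with the "count multisets" and "squared multiset counts" steps named in the computational example, and is not copied from the source's equations. The function names and the small table are hypothetical.

```python
from collections import Counter
from typing import List, Sequence


def separatory_value(rows: Sequence[Sequence[int]], columns: Sequence[int]) -> int:
    """Pairs of elements told apart by the chosen columns: (G^2 - sum n_k^2) / 2."""
    patterns = Counter(tuple(row[c] for c in columns) for row in rows)
    g = len(rows)
    return (g * g - sum(n * n for n in patterns.values())) // 2


def order_characteristics(rows: Sequence[Sequence[int]]) -> List[int]:
    """At each position keep the column that, with those already chosen, separates most pairs."""
    g = len(rows)
    all_pairs = g * (g - 1) // 2
    remaining = list(range(len(rows[0])))
    chosen: List[int] = []
    while remaining:
        best = max(remaining, key=lambda c: separatory_value(rows, chosen + [c]))
        chosen.append(best)
        remaining.remove(best)
        if separatory_value(rows, chosen) == all_pairs:
            break                      # one hundred percent separation reached
    return chosen


# Hypothetical table: 4 elements, 3 binary characteristics; column 0 separates nothing.
rows = [(0, 0, 0), (0, 1, 0), (0, 0, 1), (0, 1, 1)]
print(order_characteristics(rows))     # [1, 2]
```

Selecting the best remaining column at each step gives the same ordering as exchanging it into the next most significant position, and the loop stops early once every pair of elements is separated.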

A larger radix will allow faster identification by excluding a greater percentage of elements per characteristic. A binary radix for instance excludes only fifty percent of the elements per characteristic whereas a five-valued radix excludes eighty percent of the elements per characteristic.[5] What follows is an elucidation of the matrix and separatory equations.[6]
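The percentages quoted for the binary and five-valued cases follow from simple arithmetic, sketched below under the assumption that each value of a characteristic is shared by an equal fraction of the elements. The function names are hypothetical; the 28 elements in the example match the bounded class used in the computational example.

```python
def fraction_excluded(radix: int) -> float:
    """Share of elements ruled out by one evenly distributed characteristic."""
    return 1 - 1 / radix


def min_characteristics(n_elements: int, radix: int) -> int:
    """Fewest characteristics whose value patterns can separate every element."""
    count, patterns = 0, 1
    while patterns < n_elements:
        patterns *= radix
        count += 1
    return count


for radix in (2, 5):
    print(radix, fraction_excluded(radix), min_characteristics(28, radix))
```

Under this idealized assumption, 28 elements need at least five binary characteristics but only three five-valued ones; real data rarely divide so evenly, so these are lower bounds.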

Computational Example

Bounded Class Data

Bounded Class Dimensions

G = 28 – 28 elements – i = 0…G-1[1]

C = 10 – 10 characteristics or attributes – j = 0…C-1

V = 5 – 5 valued logic – l = 0…V-1

Order of Elements

Count multisets

Squared multiset Counts

Separatory Values

max(T) = 309 = S8 = highest initial separatory value

Notes

Mathcad’s ORIGIN function applies to all arrays such that if more than one array is being used and one array requires a zero origin then the other arrays must use a zero origin with all variables being adapted as well.


Syndromic Classification: A Process for Amplifying Information Using S-Clustering

Eugene W. Rypka, PhD

University of New Mexico, Albuquerque, New Mexico, USA

Statistics Editor: Marcello Pagano, PhD

Harvard School of Public Health, Boston, Massachusetts, USA

Nutrition 1996; 12(11/12): 827-829

In a previous issue of Nutrition, Drs. Bernstein and Pleban use the method of S-clustering to aid in nutritional classification of patients directly on-line. Classification of this type is called primary or syndromic classification.* It is created by a process called separatory (S-) clustering (E. Rypka, unpublished observations). The authors use S-clustering in Table I. S-clustering extracts features (analytes, variables) from endogenous data that amplify or maximize structural information to create classes of patients (pathophysiologic events) which are the most disjointed or separable. S-clustering differs from other classificatory methods because it finds in a database a theoretic (or greater) number of variables with the required variety that map closest to an ideal, theoretic, or structural information standard. In Table I of their article, Bernstein and Pleban indicate there would have to be 3^5 = 243 rows to show all possible patterns. In Table II of this article, I have used a 3^3 = 27 row truth table to convey the notion of mapping amplified information to an ideal, theoretic standard using just the first three columns. Variables are scaled for use in S-clustering.

A Survey of Binary Similarity and Distance Measures

Seung-Seok Choi, Sung-Hyuk Cha, Charles C. Tappert

SYSTEMICS, CYBERNETICS AND INFORMATICS 2010; 8(1): 43-48

The binary feature vector is one of the most common representations of patterns, and similarity and distance measures play a critical role in many problems such as clustering, classification, etc. Ever since Jaccard proposed a similarity measure to classify ecological species in 1901, numerous binary similarity and distance measures have been proposed in various fields. Applying appropriate measures results in more accurate data analysis. Notwithstanding, few comprehensive surveys on binary measures have been conducted. Hence we collected 76 binary similarity and distance measures used over the last century and reveal their correlations through the hierarchical clustering technique.

This paper is organized as follows. Section 2 describes the definitions of 76 binary similarity and dissimilarity measures. Section 3 discusses the grouping of those measures using hierarchical clustering. Section 4 concludes this work.

Historically, all the binary measures observed above have had a meaningful performance in their respective fields. The binary similarity coefficients proposed by Peirce, Yule, and Pearson in the 1900s contributed to the evolution of the various correlation-based binary similarity measures. The Jaccard coefficient proposed in 1901 is still widely used in various fields such as ecology and biology. The inclusion or exclusion of negative matches was actively discussed by Sokal & Sneath during the 1960s and by Goodman & Kruskal in the 1970s.
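The question of negative matches can be made concrete with two of the standard coefficients. The sketch below compares the Jaccard coefficient, which ignores characters absent from both organisms, with the simple matching coefficient, which counts them as agreement. The two test profiles are hypothetical.

```python
from typing import Sequence, Tuple


def match_counts(x: Sequence[int], y: Sequence[int]) -> Tuple[int, int, int, int]:
    """Return (a, b, c, d): 1-1 matches, 1-0 and 0-1 mismatches, 0-0 matches."""
    if len(x) != len(y):
        raise ValueError("profiles must have the same length")
    a = sum(1 for p, q in zip(x, y) if p == 1 and q == 1)
    b = sum(1 for p, q in zip(x, y) if p == 1 and q == 0)
    c = sum(1 for p, q in zip(x, y) if p == 0 and q == 1)
    d = sum(1 for p, q in zip(x, y) if p == 0 and q == 0)
    return a, b, c, d


def jaccard(x: Sequence[int], y: Sequence[int]) -> float:
    """a / (a + b + c): negative matches are excluded."""
    a, b, c, _ = match_counts(x, y)
    # Undefined when both profiles are all zero; 0.0 is returned here by assumption.
    return a / (a + b + c) if (a + b + c) else 0.0


def simple_matching(x: Sequence[int], y: Sequence[int]) -> float:
    """(a + d) / (a + b + c + d): negative matches count as agreement."""
    a, b, c, d = match_counts(x, y)
    return (a + d) / (a + b + c + d)


# Hypothetical profiles of eight binary test results for two strains.
strain_1 = [1, 1, 0, 0, 0, 0, 1, 0]
strain_2 = [1, 0, 0, 0, 0, 0, 1, 1]
print(jaccard(strain_1, strain_2), simple_matching(strain_1, strain_2))  # 0.5 0.75
```

The same pair of strains looks 50% or 75% similar depending on whether shared negative results are counted, which is why the choice of coefficient matters in numerical taxonomy.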

Polyphasic Taxonomy of the Genus Vibrio: Numerical Taxonomy of Vibrio cholerae, Vibrio parahaemolyticus, and Related Vibrio Species

R. R. COLWELL

JOURNAL OF BACTERIOLOGY, Oct. 1970; 104(1): 410-433

A set of 86 bacterial cultures, including 30 strains of Vibrio cholerae, 35 strains of V. parahaemolyticus, and 21 representative strains of Pseudomonas, Spirillum, Achromobacter, Arthrobacter, and marine Vibrio species were tested for a total of 200 characteristics. Morphological, physiological, and biochemical characteristics were included in the analysis. Overall deoxyribonucleic acid (DNA) base compositions and ultrastructure, under the electron microscope, were also examined. The taxonomic data were analyzed by computer by using numerical taxonomy programs designed to sort and cluster strains related phenetically. The V. cholerae strains formed an homogeneous cluster, sharing overall S values of >75%. Two strains, V. cholerae NCTC 30 and NCTC 8042, did not fall into the V. cholerae species group when tested by the hypothetical median organism calculation. No separation of “classic” V. cholerae, El Tor vibrios, and nonagglutinable vibrios was observed. These all fell into a single, relatively homogeneous, V. cholerae species cluster. V. parahaemolyticus strains, excepting 5144, 5146, and 5162, designated members of the species V. alginolyticus, clustered at S >80%. Characteristics uniformly present in all the Vibrio species examined are given, as are also characteristics and frequency of occurrence for V. cholerae and V. parahaemolyticus. The clusters formed in the numerical taxonomy analyses revealed similar overall DNA base compositions, with the range for the Vibrio species of 40 to 48% guanine plus cytosine. Generic level of relationship of V. cholerae and V. parahaemolyticus is considered dubious. Intra- and intergroup relationships obtained from the numerical taxonomy studies showed highly significant correlation with DNA/DNA reassociation data.

A Numerical Classification of the Genus Bacillus

By FERGUS G. PRIEST, MICHAEL GOODFELLOW AND CAROLE TODD

Journal of General Microbiology (1988), 134, 1847-1882.

Three hundred and sixty-eight strains of aerobic, endospore-forming bacteria which included type and reference cultures of Bacillus and environmental isolates were studied. Overall similarities of these strains for 118 unit characters were determined by the S_SM, S_J and D_P coefficients and clustering achieved using the UPGMA algorithm. Test error was within acceptable limits. Six cluster-groups were defined at 70% S_SM which corresponded to 69% S_P and 48-57% S_J. Groupings obtained with the three coefficients were generally similar but there were some changes in the definition and membership of cluster-groups and clusters, particularly with the S_J coefficient. The Bacillus strains were distributed among 31 major (4 or more strains), 18 minor (2 or 3 strains) and 30 single-member clusters at the 83% S_SM level. Most of these clusters can be regarded as taxospecies. The heterogeneity of several species, including Bacillus brevis, B. circulans, B. coagulans, B. megaterium, B. sphaericus and B. stearothermophilus, has been indicated and the species status of several taxa of hitherto uncertain validity confirmed. Thus on the basis of the numerical phenetic and appropriate (published) molecular genetic data, it is proposed that the following names be recognized: Bacillus flexus (Batchelor) nom. rev., Bacillus fusiformis (Smith et al.) comb. nov., Bacillus kaustophilus (Prickett) nom. rev., Bacillus psychrosaccharolyticus (Larkin & Stokes) nom. rev. and Bacillus simplex (Gottheil) nom. rev. Other phenetically well-defined taxospecies included ‘B. aneurinolyticus’, ‘B. apiarius’, ‘B. cascainensis’, ‘B. thiaminolyticus’ and three clusters of environmental isolates related to B. firmus and previously described as ‘B. firmus-B. lentus intermediates’. Future developments in the light of the numerical phenetic data are discussed.
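UPGMA clustering on simple matching distances can be sketched without external libraries. The code below is a minimal illustration and not the authors' program: the strain names and profiles are hypothetical, the distance is taken as one minus the simple matching similarity, and ties are broken by first occurrence.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List, Sequence, Tuple

Merge = Tuple[FrozenSet[str], FrozenSet[str], float]


def simple_matching_distance(x: Sequence[int], y: Sequence[int]) -> float:
    """Fraction of characters on which two profiles disagree (1 - S_SM)."""
    return sum(p != q for p, q in zip(x, y)) / len(x)


def upgma(profiles: Dict[str, Sequence[int]]) -> List[Merge]:
    """Return the merges in order as (cluster_a, cluster_b, average distance)."""
    pair_dist = {frozenset((a, b)): simple_matching_distance(profiles[a], profiles[b])
                 for a, b in combinations(profiles, 2)}

    def average(c1: FrozenSet[str], c2: FrozenSet[str]) -> float:
        # Unweighted mean over every cross-cluster pair of strains
        total = sum(pair_dist[frozenset((a, b))] for a in c1 for b in c2)
        return total / (len(c1) * len(c2))

    clusters = [frozenset([name]) for name in profiles]
    merges: List[Merge] = []
    while len(clusters) > 1:
        c1, c2 = min(combinations(clusters, 2), key=lambda pair: average(*pair))
        merges.append((c1, c2, average(c1, c2)))
        clusters = [c for c in clusters if c not in (c1, c2)] + [c1 | c2]
    return merges


# Hypothetical strains scored on four binary characters.
profiles = {"s1": (1, 1, 0, 0), "s2": (1, 1, 0, 1),
            "s3": (0, 0, 1, 1), "s4": (0, 0, 1, 0)}
for a, b, dist in upgma(profiles):
    print(sorted(a), sorted(b), f"similarity {100 * (1 - dist):.1f}%")
```

Cutting the sequence of merges at a chosen similarity level, such as the 83% S_SM level quoted in the abstract, yields the clusters at that level.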

Numerical Classification of Bacteria. Part II. Computer Analysis of Coryneform Bacteria (2): Comparison of Group-Formations Obtained on Two Different Methods of Scoring Data

By Eitaro MASUO and Toshio NAKAGAWA

Agr. Biol. Chem., 1969; 33(8): 1124-1133.

Sixty three organisms selected from 12 genera of bacteria were subjected to numerical analysis. The purpose of this work is to examine the relationships among 38 coryneform bacteria included in the test organisms by two coding methods, Sneath’s and Lockhart’s systems, and to compare the results with conventional classification. In both cases of codification, five groups and one or two single item(s) were found in the resultant classifications. Different codings brought, however, a few distinct differences in some groups, especially in a group of sporogenic bacilli or lactic-acid bacteria. So far as the present work concerns, the result obtained on Lockhart’s coding rather than that obtained on Sneath’s coding resembled the conventional classification. The taxonomic positions of corynebacteria were quite different from those of the conventional classification, regardless of which coding method was applied.

Though animal corynebacteria have conventionally been considered to occupy the taxonomic position neighboring to genera Arthrobacter and Cellulomonas and regarded to be the nucleus of so-called “coryneform bacteria,” the present work showed that many of the corynebacteria are akin to certain mycobacteria rather than to the organisms belonging to the above two genera.

Numerical Classification of Bacteria. Part III. Computer Analysis of “Coryneform Bacteria” (3): Classification Based on DNA Base Compositions

By Eitaro MASUO and Toshio NAKAGAWA

Agr. Biol. Chem., 1969; 33(11): 1570-1576

It has been known that the base compositions of deoxyribonucleic acids (DNA) are quite different from organism to organism. A pertinent example of this diversity is found in bacterial species. The base compositions of DNA isolated from a wide variety of bacteria are distributed in a range from 25 to 75 GC mole-percent (100 × (G+C)/(A+T+G+C)).1) The usefulness of the information of DNA base composition for the taxonomy of bacteria has been emphasized by several authors. Lee et al., Sueoka, and Freese have speculated on the evolutionary significance of microbial DNA base composition. They pointed out that closely related microorganisms generally showed similar base compositions of DNA, and suggested that phylogenetic relationship should be reflected in the GC content.
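The GC mole-percent formula quoted above can be illustrated with a short Python sketch. The function name `gc_mole_percent` and the toy sequence are assumptions made for illustration only; the original authors determined base composition experimentally, not from sequence strings.

```python
def gc_mole_percent(sequence: str) -> float:
    """Return 100 * (G + C) / (A + T + G + C) for a DNA sequence.

    Characters other than A, T, G, C (for example N) are ignored.
    """
    seq = sequence.upper()
    counts = {base: seq.count(base) for base in "ATGC"}
    total = sum(counts.values())
    if total == 0:
        raise ValueError("sequence contains no A, T, G, or C bases")
    return 100.0 * (counts["G"] + counts["C"]) / total


# Hypothetical toy fragment, not real genomic data.
example = "ATGCGGCCTA"
print(f"GC content: {gc_mole_percent(example):.1f} mole-percent")
```

On a whole-genome scale, values computed this way would be expected to fall within the 25 to 75 percent range cited in the abstract.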

In the present paper are compared the results of numerical classifications of 45 bacteria based on the two different similarity matrices: one representing the overall similarities of phenotypic properties, the other representing the similarities of GC contents.
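As a rough illustration of what two such similarity matrices might look like, the sketch below builds one from binary phenotypic characters and one from GC contents. The simple matching coefficient is a standard measure in numerical taxonomy, but the GC similarity formula used here (1 minus the absolute GC difference divided by 100), the strain names, and all data values are assumptions, not the measures or data of the original paper.

```python
from itertools import combinations


def simple_matching(a, b):
    """Fraction of binary characters on which two strains agree."""
    if len(a) != len(b):
        raise ValueError("character vectors must have equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def gc_similarity(gc_a, gc_b):
    """Assumed measure: 1 - |difference in GC mole-percent| / 100."""
    return 1.0 - abs(gc_a - gc_b) / 100.0


def similarity_matrix(names, score):
    """Build a symmetric matrix as a dict keyed by (name, name) pairs."""
    matrix = {(n, n): 1.0 for n in names}
    for a, b in combinations(names, 2):
        matrix[(a, b)] = matrix[(b, a)] = score(a, b)
    return matrix


# Hypothetical strains: (binary phenotypic characters, GC mole-percent).
strains = {
    "strain_A": ([1, 1, 0, 1, 0, 1], 55.0),
    "strain_B": ([1, 1, 0, 1, 1, 1], 57.0),
    "strain_C": ([0, 0, 1, 0, 1, 0], 34.0),
}
names = list(strains)

phenotypic = similarity_matrix(
    names, lambda a, b: simple_matching(strains[a][0], strains[b][0])
)
gc_based = similarity_matrix(
    names, lambda a, b: gc_similarity(strains[a][1], strains[b][1])
)

for pair in combinations(names, 2):
    print(pair, round(phenotypic[pair], 3), round(gc_based[pair], 3))
```

Comparing the two matrices pair by pair shows where phenotypic resemblance and base composition agree or diverge, which is the kind of comparison the paper describes.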

Advanced computational algorithms for microbial community analysis using massive 16S rRNA sequence data

Y Sun, Y Cai, V Mai, W Farmerie, F Yu, J Li and S Goodison

Nucleic Acids Research, 2010; 38(22): e205

http://dx.doi.org:/10.1093/nar/gkq872

With the aid of next-generation sequencing technology, researchers can now obtain millions of microbial signature sequences for diverse applications ranging from human epidemiological studies to global ocean surveys. The development of advanced computational strategies to maximally extract pertinent information from massive nucleotide data has become a major focus of the bioinformatics community. Here, we describe a novel analytical strategy including discriminant and topology analyses that enables researchers to deeply investigate the hidden world of microbial communities, far beyond basic microbial diversity estimation. We demonstrate the utility of our approach through a computational study performed on a previously published massive human gut 16S rRNA data set. The application of discriminant and topology analyses enabled us to derive quantitative disease-associated microbial signatures and describe microbial community structure in far more detail than previously achievable. Our approach provides rigorous statistical tools for sequence based studies aimed at elucidating associations between known or unknown organisms and a variety of physiological or environmental conditions.

What is Drug Resistance?

Antimicrobial resistance is the ability of microbes, such as bacteria, viruses, parasites, or fungi, to grow in the presence of a chemical (drug) that would normally kill them or limit their growth.

Credit: NIAID

Diagram showing the difference between non-resistant bacteria and drug resistant bacteria. Non-resistant bacteria multiply, and upon drug treatment, the bacteria die. Drug resistant bacteria multiply as well, but upon drug treatment, the bacteria continue to spread.
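The contrast the diagram describes can be sketched as a toy discrete-time model. Every number below (starting population, growth rate, kill rate, timing of treatment) is a hypothetical value chosen for illustration; this is not a pharmacodynamic model and does not come from NIAID.

```python
def simulate(initial, growth_rate, kill_rate, drug_start, steps):
    """Toy model: the population grows by growth_rate each step and,
    from step drug_start onward, a fraction kill_rate is also killed."""
    population = float(initial)
    history = [population]
    for step in range(steps):
        population *= 1.0 + growth_rate
        if step >= drug_start:
            population *= 1.0 - kill_rate
        history.append(population)
    return history


# Hypothetical parameters: the drug kills 90% of susceptible cells per
# step and has no effect on resistant cells.
susceptible = simulate(1000, 0.5, 0.9, drug_start=3, steps=8)
resistant = simulate(1000, 0.5, 0.0, drug_start=3, steps=8)

for step, (s, r) in enumerate(zip(susceptible, resistant)):
    print(f"step {step}: susceptible={s:10.1f}  resistant={r:10.1f}")
```

Both populations grow identically until treatment begins; afterwards the susceptible population collapses while the resistant one keeps expanding.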

Between 5 and 10 percent of all hospital patients develop an infection. About 90,000 of these patients die each year as a result of their infection, up from 13,300 patient deaths in 1992.

According to the Centers for Disease Control and Prevention (April 2011), antibiotic resistance in the United States costs an estimated $20 billion a year in excess health care costs, $35 million in other societal costs and more than 8 million additional days that people spend in the hospital.

Resistance to Antibiotics: Are We in the Post-Antibiotic Era?

Alfonso J. Alanis

Archives of Medical Research 36 (2005) 697–705

http://dx.doi.org:/10.1016/j.arcmed.2005.06.009

Serious infections caused by bacteria that have become resistant to commonly used antibiotics have become a major global healthcare problem in the 21st century. They not only are more severe and require longer and more complex treatments, but they are also significantly more expensive to diagnose and to treat. Antibiotic resistance, initially a problem of the hospital setting associated with an increased number of hospital acquired infections usually in critically ill and immunosuppressed patients, has now extended into the community causing severe infections difficult to diagnose and treat. The molecular mechanisms by which bacteria have become resistant to antibiotics are diverse and complex. Bacteria have developed resistance to all different classes of antibiotics discovered to date. The most frequent type of resistance is acquired and transmitted horizontally via the conjugation of a plasmid. In recent times new mechanisms of resistance have resulted in the simultaneous development of resistance to several antibiotic classes creating very dangerous multidrug-resistant (MDR) bacterial strains, some also known as ‘‘superbugs’’. The indiscriminate and inappropriate use of antibiotics in outpatient clinics, hospitalized patients and in the food industry is the single largest factor leading to antibiotic resistance. The pharmaceutical industry, large academic institutions or the government are not investing the necessary resources to produce the next generation of newer safe and effective antimicrobial drugs. In many cases, large pharmaceutical companies have terminated their anti-infective research programs altogether due to economic reasons. The potential negative consequences of all these events are relevant because they put society at risk for the spread of potentially serious MDR bacterial infections.

Targeting the Human Macrophage with Combinations of Drugs and Inhibitors of Ca2+ and K+ Transport to Enhance the Killing of Intracellular Multi-Drug Resistant M. tuberculosis (MDR-TB) – a Novel, Patentable Approach to Limit the Emergence of XDR-TB

Marta Martins

Recent Patents on Anti-Infective Drug Discovery, 2011, 6, 000-000

The emergence of resistance in Tuberculosis has become a serious problem for the control of this disease. For that reason, new therapeutic strategies that can be implemented in the clinical setting are urgently needed. The design of new compounds active against mycobacteria must take into account that Tuberculosis is mainly an intracellular infection of the alveolar macrophage and therefore must maintain activity within the host cells. An alternative therapeutic approach will be described in this review, focusing on the activation of the phagocytic cell and the subsequent killing of the internalized bacteria. This approach explores the combined use of antibiotics and phenothiazines, or Ca2+ and K+ flux inhibitors, in the infected macrophage. Targeting the infected macrophage and not the internalized bacteria could overcome the problem of bacterial multi-drug resistance. This will potentially eliminate the appearance of new multi-drug resistant tuberculosis (MDR-TB) cases and subsequently prevent the emergence of extensively-drug resistant tuberculosis (XDR-TB). Patents resulting from this novel and innovative approach could be extremely valuable if they can be implemented in the clinical setting. Other patents will also be discussed such as the treatment of TB using immunomodulator compounds (for example: betaglycans).

Six Epigenetic Faces of Streptococcus

Kevin Mayer

http://www.genengnews.com/gen-news-highlights/six-epigenetic-faces-of-streptococcus/81250430/

Medical illustration of Streptococcus pneumoniae. [CDC]

It appears that S. pneumoniae has even more personalities, each associated with a different proclivity toward invasive, life-threatening disease. In fact, any of six personalities may emerge depending on the action of a single genetic switch.

To uncover the switch, an international team of scientists conducted a study in genomics, but they looked beyond nucleotide polymorphisms or accessory regions as possible phenotype-shifting mechanisms. Instead, they focused on the potential of restriction-modification (RM) systems to mediate gene regulation via epigenetic changes.

Scientists representing the University of Leicester, Griffith University’s Institute for Glycomics, the University of Adelaide, and Pacific Biosciences realized that the S. pneumoniae genome contains two Type I, three Type II, and one Type IV RM systems. Of these, only the DpnI Type II RM system had been described in detail. Switchable Type I systems had been described previously, but these reports did not provide evidence for differential methylation or for phenotypic impact.

As it turned out, the Type I system embodied a mechanism capable of randomly changing the bacterium’s characteristics into six alternative states. The mechanism’s details were presented September 30 in Nature Communications, in an article entitled, “A random six-phase switch regulates pneumococcal virulence via global epigenetic changes.”

“The underlying mechanism for such phase variation consists of genetic rearrangements in a Type I restriction-modification system (SpnD39III),” wrote the authors. “The rearrangements generate six alternative specificities with distinct methylation patterns, as defined by single-molecule, real-time (SMRT) methylomics.”

Eradication of multidrug-resistant A. baumannii in burn wounds by antiseptic pulsed electric field.

A Golberg, GF Broelsch, D Vecchio, S Khan, MR Hamblin, WG Austen, Jr, RL Sheridan, ML Yarmush.

Emerging bacterial resistance to multiple drugs is an increasing problem in burn wound management. New non-pharmacologic interventions are needed for wound disinfection. Here we report on a novel physical method for disinfection: antiseptic pulsed electric field (PEF) applied externally to the infected wounds. In an animal model, we show that PEF can reduce the load of multidrug resistant Acinetobacter baumannii present in a full thickness burn wound by more than four orders of magnitude, as detected by bioluminescence imaging. Furthermore, using a finite element numerical model, we demonstrate that PEF provides non-thermal, homogeneous, full thickness treatment for the burn wound, thus overcoming the limitation of treatment depth for many topical antimicrobials. These modeling tools and our in vivo results will be extremely useful for further translation of the PEF technology to the clinical setting. We believe that PEF, in combination with systemic antibiotics, will synergistically eradicate multidrug-resistant burn wound infections, prevent biofilm formation and restore the natural skin microbiome. PEF provides a new platform for combating infection in patients; therefore, it has the potential to significantly decrease morbidity and mortality.

Golberg, A. & Yarmush, M. L. Nonthermal irreversible electroporation: fundamentals, applications, and challenges. IEEE Trans Biomed Eng 60, 707-14 (2013).
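To put the "four orders of magnitude" figure from the abstract above in perspective, the short sketch below converts between a log10 reduction and a percentage reduction. The before and after values are hypothetical; the study itself quantified bacterial load by bioluminescence imaging, not by the counts used here.

```python
import math


def log10_reduction(load_before, load_after):
    """Number of orders of magnitude by which a bacterial load dropped."""
    if load_before <= 0 or load_after <= 0:
        raise ValueError("loads must be positive")
    return math.log10(load_before / load_after)


def percent_reduction(log_reduction):
    """Percentage of the original load removed for a given log10 reduction."""
    return 100.0 * (1.0 - 10.0 ** (-log_reduction))


# Hypothetical loads in arbitrary units.
before, after = 1e8, 1e4
orders = log10_reduction(before, after)
print(f"{orders:.1f} log10 reduction = {percent_reduction(orders):.2f}% removed")
```

A four-log reduction therefore corresponds to removing 99.99 percent of the initial load.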

Mechanisms Of Antibiotic Resistance In Salmonella: Efflux Pumps, Genetics, Quorum Sensing And Biofilm Formation.

Martins M, McCusker M, Amaral L, Fanning S

Perspectives in Drug Discovery and Design 02/2011; 8:114-123.

In Salmonella the main mechanisms of antibiotic resistance are mutations in target genes (such as DNA gyrase and topoisomerase IV) and the over-expression of efflux pumps. However, other mechanisms such as changes in the cell envelope; down regulation of membrane porins; increased lipopolysaccharide (LPS) component of the outer cell membrane; quorum sensing and biofilm formation can also contribute to the resistance seen in this microorganism. To overcome this problem new therapeutic approaches are urgently needed. In the case of efflux-mediated multidrug resistant isolates, one of the treatment options could be the use of efflux pump inhibitors (EPIs) in combination with the antibiotics to which the bacteria is resistant. By blocking the efflux pumps resistance is partly or wholly reversed, allowing antibiotics showing no activity against the MDR strains to be used to treat these infections. Compounds that show potential as an EPI are therefore of interest, as well as new strategies to target the efflux systems. Quorum sensing (QS) and biofilm formation are systems also known to be involved in antibiotic resistance. Consequently, compounds that can disrupt or inhibit these bacterial “communication systems” will be of use in the treatment of these infections.

Role of Phenothiazines and Structurally Similar Compounds of Plant Origin in the Fight against Infections by Drug Resistant Bacteria

SG Dastidar, JE Kristiansen, J Molnar and L Amaral

Antibiotics 2013, 2, 58-71;

http://dx.doi.org:/10.3390/antibiotics2010058

Phenothiazines have their primary effects on the plasma membranes of prokaryotes and eukaryotes. Among the components of the prokaryotic plasma membrane affected are efflux pumps, their energy sources and energy providing enzymes, such as ATPase, and genes that regulate and code for the permeability aspect of a bacterium. The response of multidrug and extensively drug resistant tuberculosis to phenothiazines shows an alternative therapy for its treatment. Many phenothiazines have shown synergistic activity with several antibiotics thereby lowering the doses of antibiotics administered for specific bacterial infections. Trimeprazine is synergistic with trimethoprim. Flupenthixol (Fp) has been found to be synergistic with penicillin and chlorpromazine (CPZ); in addition, some antibiotics are also synergistic. Along with the antibacterial action described in this review, many phenothiazines possess plasmid curing activities, which render the bacterial carrier of the plasmid sensitive to antibiotics. Thus, simultaneous applications of a phenothiazine like TZ would not only act as an additional antibacterial agent but also would help to eliminate drug resistant plasmid from the infectious bacterial cells.

Multidrug Efflux Pumps Described for Staphylococcus aureus

| Efflux Pump | Family (a) | Regulator(s) (b) | Substrate Specificity | References |
|---|---|---|---|---|
| Chromosomally-encoded Efflux Systems | | | | |
| NorA | MFS | MgrA, NorG(?) | Hydrophilic fluoroquinolones (ciprofloxacin, norfloxacin); QACs (tetraphenylphosphonium, benzalkonium chloride); Dyes (e.g. ethidium bromide, rhodamine) | [16,18,19] |
| NorB | MFS | MgrA, NorG | Fluoroquinolones (e.g. hydrophilic: ciprofloxacin, norfloxacin and hydrophobic: moxifloxacin, sparfloxacin); Tetracycline; QACs (e.g. tetraphenylphosphonium, cetrimide); Dyes (e.g. ethidium bromide) | [31] |
| NorC | MFS | MgrA(?), NorG | Fluoroquinolones (e.g. hydrophilic: ciprofloxacin and hydrophobic: moxifloxacin); Dyes (e.g. rhodamine) | [35,36] |
| MepA | MATE | MepR | Fluoroquinolones (e.g. hydrophilic: ciprofloxacin, norfloxacin and hydrophobic: moxifloxacin, sparfloxacin); Glycylcyclines (e.g. tigecycline); QACs (e.g. tetraphenylphosphonium, cetrimide, benzalkonium chloride); Dyes (e.g. ethidium bromide) | [37,38] |
| MdeA | MFS | n.i. | Hydrophilic fluoroquinolones (e.g. ciprofloxacin, norfloxacin); Virginiamycin, novobiocin, mupirocin, fusidic acid; QACs (e.g. tetraphenylphosphonium, benzalkonium chloride, dequalinium); Dyes (e.g. ethidium bromide) | [39,40] |
| SepA | n.d. | n.i. | QACs (e.g. benzalkonium chloride); Biguanidines (e.g. chlorhexidine); Dyes (e.g. acriflavine) | [41] |
| SdrM | MFS | n.i. | Hydrophilic fluoroquinolones (e.g. norfloxacin); Dyes (e.g. ethidium bromide, acriflavine) | [42] |
| LmrS | MFS | n.i. | Oxazolidinone (linezolid); Phenicols (e.g. chloramphenicol, florfenicol); Trimethoprim, erythromycin, kanamycin, fusidic acid; QACs (e.g. tetraphenylphosphonium); Detergents (e.g. sodium dodecyl sulphate); Dyes (e.g. ethidium bromide) | [43] |
| Plasmid-encoded Efflux Systems | | | | |
| QacA | MFS | QacR | QACs (e.g. tetraphenylphosphonium, benzalkonium chloride, dequalinium); Biguanidines (e.g. chlorhexidine); Diamidines (e.g. pentamidine); Dyes (e.g. ethidium bromide, rhodamine, acriflavine) | [45,49] |
| QacB | MFS | QacR | QACs (e.g. tetraphenylphosphonium, benzalkonium chloride); Dyes (e.g. ethidium bromide, rhodamine, acriflavine) | [53] |
| Smr | SMR | n.i. | QACs (e.g. benzalkonium chloride, cetrimide); Dyes (e.g. ethidium bromide) | [58,61] |
| QacG | SMR | n.i. | QACs (e.g. benzalkonium chloride, cetyltrimethylammonium); Dyes (e.g. ethidium bromide) | [67] |
| QacH | SMR | n.i. | QACs (e.g. benzalkonium chloride, cetyltrimethylammonium); Dyes (e.g. ethidium bromide) | [68] |
| QacJ | SMR | n.i. | QACs (e.g. benzalkonium chloride, cetyltrimethylammonium); Dyes (e.g. ethidium bromide) | [69] |

(a) n.d.: The family of transporters to which SepA belongs is not elucidated to date.

(b) n.i.: The transporter has no regulator identified to date.

QACs: quaternary ammonium compounds
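For readers who want to query the table programmatically, the pump names, families, and genetic locations can be held in a small Python structure and grouped by transporter family. This is only a convenience sketch: the names `EFFLUX_PUMPS` and `group_by_family` are made up for illustration, and the substrate and reference columns are omitted for brevity.

```python
from collections import defaultdict

# (pump, family, location) transcribed from the table above.
# None marks SepA, whose family is not determined (n.d.).
EFFLUX_PUMPS = [
    ("NorA", "MFS", "chromosome"),
    ("NorB", "MFS", "chromosome"),
    ("NorC", "MFS", "chromosome"),
    ("MepA", "MATE", "chromosome"),
    ("MdeA", "MFS", "chromosome"),
    ("SepA", None, "chromosome"),
    ("SdrM", "MFS", "chromosome"),
    ("LmrS", "MFS", "chromosome"),
    ("QacA", "MFS", "plasmid"),
    ("QacB", "MFS", "plasmid"),
    ("Smr", "SMR", "plasmid"),
    ("QacG", "SMR", "plasmid"),
    ("QacH", "SMR", "plasmid"),
    ("QacJ", "SMR", "plasmid"),
]


def group_by_family(pumps):
    """Map each transporter family to the pumps listed under it."""
    groups = defaultdict(list)
    for name, family, _location in pumps:
        groups[family or "n.d."].append(name)
    return dict(groups)


by_family = group_by_family(EFFLUX_PUMPS)
for family, names in by_family.items():
    print(f"{family}: {', '.join(names)}")
```

Grouping this way makes it easy to see that the SMR-family pumps in the table are all plasmid-encoded, while the MFS family spans both chromosomal and plasmid systems.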

The importance of efflux pumps in bacterial antibiotic resistance

M. A. Webber and L. J. V. Piddock

Journal of Antimicrobial Chemotherapy (2003) 51, 9–11

http://dx.doi.org:/10.1093/jac/dkg050

Efflux pumps are transport proteins involved in the extrusion of toxic substrates (including virtually all classes of clinically relevant antibiotics) from within cells into the external environment. These proteins are found in both Gram-positive and -negative bacteria as well as in eukaryotic organisms. Pumps may be specific for one substrate or may transport a range of structurally dissimilar compounds (including antibiotics of multiple classes); such pumps can be associated with multiple drug resistance (MDR). In the prokaryotic kingdom there are five major families of efflux transporter: MF (major facilitator), MATE (multidrug and toxic efflux), RND (resistance-nodulation-division), SMR (small multidrug resistance) and ABC (ATP binding cassette). All these systems utilize the proton motive force as an energy source, apart from the ABC family, which utilizes ATP hydrolysis to drive the export of substrates. Advances in DNA technology have led to the identification of members of the above families. Transporters that efflux multiple substrates, including antibiotics, have not evolved in response to the stresses of the antibiotic era. All bacterial genomes studied contain efflux pumps that indicate their ancestral origins. It has been estimated that ∼5–10% of all bacterial genes are involved in transport and a large proportion of these encode efflux pumps.

Multidrug-resistance efflux pumps — not just for resistance

Laura J. V. Piddock

Nature Reviews Microbiology, Aug 2006; 4: 629

It is well established that multidrug-resistance efflux pumps encoded by bacteria can confer clinically relevant resistance to antibiotics. It is now understood that these efflux pumps also have a physiological role(s). They can confer resistance to natural substances produced by the host, including bile, hormones and host defense molecules. In addition, some efflux pumps of the resistance nodulation division (RND) family have been shown to have a role in the colonization and the persistence of bacteria in the host. Here, I present the accumulating evidence that multidrug-resistance efflux pumps have roles in bacterial pathogenicity and propose that these pumps therefore have greater clinical relevance than is usually attributed to them.