George A. Miller, a Pioneer in Cognitive Psychology, Is Dead at 92

Larry H. Bernstein, MD, FCAP, Curator

Leaders in Pharmaceutical Intelligence

Series E. 2; 5.10


By PAUL VITELLO, AUG. 1, 2012

Miller began his education studying speech and language and published papers on these topics, focusing on the mathematical, computational and psychological aspects of the field. He started his career at a time when the reigning theory in psychology was behaviorism, which eschewed any attempt to study mental processes and focused only on observable behavior. Working mostly at Harvard University, MIT and Princeton University, Miller introduced experimental techniques to study the psychology of mental processes, linking the new field of cognitive psychology to the broader area of cognitive science, including computation theory and linguistics. He collaborated and co-authored work with other figures in cognitive science and psycholinguistics, such as Noam Chomsky. For moving psychology into the realm of mental processes and for aligning that move with information theory, computation theory, and linguistics, Miller is considered one of the great twentieth-century psychologists. A Review of General Psychology survey, published in 2002, ranked Miller as the 20th most cited psychologist of that era.[2]

Remembering George A. Miller

The human mind works a lot like a computer: It collects, saves, modifies, and retrieves information. George A. Miller, one of the founders of cognitive psychology, was a pioneer who recognized that the human mind can be understood using an information-processing model. His insights helped move psychological research beyond behaviorist methods that dominated the field through the 1950s. In 1991, he was awarded the National Medal of Science for his significant contributions to our understanding of the human mind.

Working memory

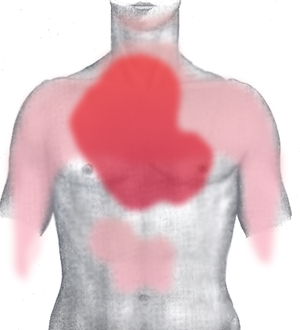

From the days of William James, psychologists had held that memory consisted of short-term and long-term memory. While short-term memory was expected to be limited, its exact limits were not known. In 1956, Miller quantified its capacity limit in the paper “The magical number seven, plus or minus two”. He tested immediate memory with tasks such as asking a person to repeat a set of digits just presented; absolute judgment by presenting a stimulus and a label, and asking the person to recall the label later; and span of attention by asking the person to quickly count the items in a group of more than a few. In all three cases, Miller found the average limit to be seven items. He had mixed feelings about the attention paid to the exact number seven as a measure of short-term memory, and felt his work had often been misquoted. Introducing the paper on the research for the first time, he stated that he was being persecuted by an integer.[1] Miller also found that humans remember chunks of information, interrelating bits using some scheme, and that the limit applies to chunks. Miller himself saw no relationship among the disparate tasks of immediate memory and absolute judgment, but lumped them together to fill a one-hour presentation. The results influenced the budding field of cognitive psychology.[15]

WordNet

For many years, starting in 1986, Miller directed the development of WordNet, a large computer-readable electronic reference usable in applications such as search engines.[12] WordNet is a dictionary of words showing their linkages by meaning. Its fundamental building block is a synset, which is a collection of synonyms representing a concept or idea. A word can belong to multiple synsets. The synsets are grouped separately into nouns, verbs, adjectives and adverbs, with links existing only within each of these four major groups and not between them. Going beyond a thesaurus, WordNet also included inter-word relationships such as part/whole relationships and hierarchies of inclusion.[16] Miller and colleagues had planned the tool to test psycholinguistic theories on how humans use and understand words.[17] Miller also later worked closely with the developers at Simpli.com Inc. on a meaning-based keyword search engine based on WordNet.[18]
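The structure described above can be sketched in a few lines of Python. This is only a toy model built for illustration, not WordNet's actual data format or API: the `Synset` class, the helper functions and the handful of sample words are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Synset:
    """A set of synonymous words standing for one concept (toy model)."""
    name: str
    pos: str                                        # "noun", "verb", "adjective" or "adverb"
    words: frozenset
    hypernyms: list = field(default_factory=list)   # more general synsets (inclusion hierarchy)
    part_of: list = field(default_factory=list)     # wholes this concept is a part of


def link_hypernym(child, parent):
    """Add an inclusion link, allowed only inside one part-of-speech group."""
    if child.pos != parent.pos:
        raise ValueError("links exist only within a part-of-speech group")
    child.hypernyms.append(parent)


def hypernym_chain(synset):
    """Follow the first hypernym link upward and return the names visited."""
    chain = [synset.name]
    while synset.hypernyms:
        synset = synset.hypernyms[0]
        chain.append(synset.name)
    return chain


def synsets_for(word, synsets):
    """Return the names of every synset that contains the word."""
    return [s.name for s in synsets if word in s.words]


# Hypothetical sample data, invented for this sketch.
entity = Synset("entity", "noun", frozenset({"entity"}))
vehicle = Synset("vehicle", "noun", frozenset({"vehicle"}))
car = Synset("car", "noun", frozenset({"car", "auto", "automobile"}))
wheel = Synset("wheel", "noun", frozenset({"wheel"}))
bank_institution = Synset("bank.institution", "noun", frozenset({"bank", "depository"}))
bank_riverside = Synset("bank.riverside", "noun", frozenset({"bank", "riverbank"}))
run = Synset("run", "verb", frozenset({"run", "sprint"}))

link_hypernym(vehicle, entity)   # a vehicle is a kind of entity
link_hypernym(car, vehicle)      # a car is a kind of vehicle
wheel.part_of.append(car)        # part/whole relationship

all_synsets = [entity, vehicle, car, wheel, bank_institution, bank_riverside, run]

print(hypernym_chain(car))               # inclusion hierarchy for "car"
print(synsets_for("bank", all_synsets))  # one word, two synsets
```

The word "bank" appears in two synsets because it names two concepts, and an attempt to link the verb synset `run` to a noun synset raises an error, mirroring the rule that links stay within one of the four groups.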

Language psychology and computation

Miller is considered one of the founders of psycholinguistics, which links language and cognition in psychology to analyze how people use and create language.[1] His 1951 book Language and Communication is considered seminal in the field.[5] His later book, The Science of Words (1991), also focused on language psychology.[19] He published papers with Noam Chomsky on the mathematics and computational aspects of language and its syntax, two new areas of study.[20][21][22] Miller also researched how people understand words and sentences, the same problem faced by artificial speech-recognition technology. The book Plans and the Structure of Behavior (1960), written with Eugene Galanter and Karl H. Pribram, explored how humans plan and act, and tried to extrapolate this to how a robot could be programmed to plan and do things.[1] Miller is also known for coining Miller’s Law: “In order to understand what another person is saying, you must assume it is true and try to imagine what it could be true of”.[23]

Language and Communication, 1951

Miller’s Language and Communication was one of the first significant texts in the study of language behavior. The book was a scientific study of language, emphasizing quantitative data, and was based on the mathematical model of Claude Shannon’s information theory.[24] It used a probabilistic model imposed on a learning-by-association scheme borrowed from behaviorism, with Miller not yet attached to a pure cognitive perspective.[25] The first part of the book reviewed information theory, the physiology and acoustics of phonetics, speech recognition and comprehension, and statistical techniques to analyze language.[24] The focus was more on speech generation than recognition.[25] The second part had the psychology: idiosyncratic differences across people in language use; developmental linguistics; the structure of word associations in people; use of symbolism in language; and social aspects of language use.[24]

Reviewing the book, Charles E. Osgood classified it as a graduate-level text based more on objective facts than on theoretical constructs. He thought the book was verbose on some topics and too brief on others not directly related to the author’s area of expertise. He was also critical of Miller’s use of simple, Skinnerian single-stage stimulus-response learning to explain human language acquisition and use. This approach, per Osgood, made it impossible to analyze the concept of meaning and the idea of language consisting of representational signs. He did find the book objective in its emphasis on facts over theory, and clear in depicting the application of information theory to psychology.[24]

Plans and the Structure of Behavior, 1960

In Plans and the Structure of Behavior, Miller and his co-authors tried to explain, from an artificial-intelligence computational perspective, how animals plan and act.[26] This was a radical break from behaviorism, which explained behavior as a set or sequence of stimulus-response actions. The authors introduced a planning element controlling such actions.[27] They saw all plans as being executed based on input, using stored or inherited information about the environment (called the image) and a strategy called test-operate-test-exit (TOTE). The image was essentially a stored memory of all past context, akin to Tolman’s cognitive map. The TOTE strategy, in its initial test phase, compared the input against the image; if there was incongruity, the operate function attempted to reduce it. This cycle would be repeated until the incongruity vanished, and then the exit function would be invoked, passing control to another TOTE unit in a hierarchically arranged scheme.[26]
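The test-operate-test-exit cycle reads naturally as a loop. The following is a minimal sketch under stated assumptions, not the authors' own formalization: the function `tote`, its parameters, the `max_cycles` safety limit and the nail-driving task with its numbers are all invented here for illustration. The test function stands in for the comparison of input against the image, and nesting one unit inside another unit's operate phase is one simple way to suggest the hierarchical arrangement.

```python
def tote(state, test, operate, max_cycles=100):
    """Run one test-operate-test-exit unit (illustrative sketch).

    test(state)    -> True when the state is congruent with the desired image
    operate(state) -> a new state that should reduce the incongruity
    Returns the final state and the number of operate cycles used.
    """
    cycles = 0
    while not test(state):              # Test: any incongruity left?
        if cycles >= max_cycles:        # Safety limit, an assumption of this sketch
            raise RuntimeError("incongruity was not removed")
        state = operate(state)          # Operate: try to reduce it
        cycles += 1
    return state, cycles                # Exit: hand control back to the caller


# Hypothetical lower-level unit: drive one nail until it is flush.
def nail_is_flush(height_mm):
    return height_mm <= 0


def strike(height_mm):
    return max(0, height_mm - 4)        # each blow sinks the nail by up to 4 mm


# Hypothetical higher-level unit whose operate phase runs the lower-level unit.
def all_flush(heights):
    return all(h <= 0 for h in heights)


def drive_next_nail(heights):
    i = next(k for k, h in enumerate(heights) if h > 0)
    flush_height, _ = tote(heights[i], nail_is_flush, strike)
    return heights[:i] + [flush_height] + heights[i + 1:]


final_height, strikes = tote(10, nail_is_flush, strike)
board, nails_driven = tote([10, 3, 0], all_flush, drive_next_nail)
print(final_height, strikes)
print(board, nails_driven)
```

Each unit knows nothing about the task beyond its own test and operate functions, which is what lets units be stacked: the outer unit exits only when every inner unit has exited.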

Peter Milner, in a review in the Canadian Journal of Psychology, noted that the book was short on concrete details on implementing the TOTE strategy. He also criticized the book for not tying its model to details from neurophysiology at a molecular level. In his view, the book covered the brain only at the gross level of lesion studies, showing that some of its regions could possibly implement some TOTE strategies, without giving the reader any indication of how a region could implement the strategy.[26]

The Psychology of Communication, 1967

Miller’s 1967 work, The Psychology of Communication, was a collection of seven previously published articles. The first, “Information and Memory,” dealt with chunking, presenting the idea of separating physical length (the number of items presented to be learned) from psychological length (the number of ideas with which the recipient manages to categorize and summarize the items). The capacity of short-term memory was measured in units of psychological length, which argued against a pure behaviorist interpretation, since the meaning of items, beyond reinforcement and punishment, was central to psychological length.[28]
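The difference between the two lengths can be illustrated with a small recoding exercise. The sketch below assumes a learner who already knows how to read three binary digits as one octal digit; the function name and the sample string are made up for this example, and real chunking depends on what is meaningful to the individual rather than on a fixed grouping rule.

```python
def recode_binary_to_octal(bits):
    """Group binary digits in threes and name each group by its octal digit."""
    if len(bits) % 3 != 0:
        raise ValueError("this sketch expects a multiple of three digits")
    return [str(int(bits[i:i + 3], 2)) for i in range(0, len(bits), 3)]


presented = "101000100111001110"            # hypothetical list of 18 binary digits
chunks = recode_binary_to_octal(presented)  # what the practiced learner holds instead

physical_length = len(presented)            # number of items presented
psychological_length = len(chunks)          # number of chunks actually retained

print(chunks)
print(physical_length, psychological_length)
```

The same 18 items shrink to 6 chunks once a recoding scheme is available, which is why a limit stated in chunks says little about the raw number of items a person can reproduce.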

The second essay was the paper on the magical number seven. The third, “The human link in communication systems,” used information theory and its idea of channel capacity to analyze human perception bandwidth. The essay concluded that how much of what impinges on us we can absorb as knowledge is limited, for each property of the stimulus, to a handful of items.[28] The paper on “Psycholinguists” described how the effort of both speaking and understanding a sentence was related to how many structures contained similar structures nested inside them when the sentence was broken down into clauses and phrases.[29] The book, in general, used the Chomskian view of seeing language rules of grammar as having a biological basis (disproving the simple behaviorist idea that language performance improved with reinforcement) and used the tools of information and computation to place hypotheses on a sound theoretical framework and to analyze data practically and efficiently. Miller specifically addressed experimental data refuting the behaviorist framework at the concept level in the field of language and cognition. He noted that this only qualified behaviorism at the level of cognition, and did not overthrow it in other spheres of psychology.[28]
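The channel-capacity idea used in the third essay can be made concrete with a little arithmetic. In information theory, telling apart n equally likely alternatives without error takes log2(n) bits, so a limit of "a handful of items" per stimulus property corresponds to only a few bits. The helper names and the sample values below are assumptions chosen for illustration, and the equal-likelihood assumption is a simplification.

```python
import math


def bits_for_alternatives(n):
    """Information, in bits, needed to pick one of n equally likely alternatives."""
    return math.log2(n)


def alternatives_for_bits(bits):
    """Number of equally likely alternatives that a given number of bits can separate."""
    return 2 ** bits


# Illustrative values only; 7 is included because of the "magical number seven".
for n in (2, 4, 7, 8, 16):
    print(f"{n:2d} alternatives -> {bits_for_alternatives(n):.2f} bits")
```

Doubling the number of alternatives adds only one bit, so a perceptual channel limited to two or three bits can separate no more than a handful of categories along a single stimulus dimension.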

https://en.wikipedia.org/wiki/George_Armitage_Miller